AI in a Digital Vault: What Disconnected Sovereign Clouds Mean for Law Firms

When a client sends a barrister a bundle of confidential acquisition documents, a standard public cloud AI service can be a liability. Disconnected sovereign clouds are designed to let UK law firms run powerful AI models on client data without touching the public internet, so that 'data residency' becomes a physical reality rather than a contractual promise.

Disconnected sovereign cloud architectures address exactly that problem. The concept is not new, but its practical accessibility for organisations outside defence and intelligence is changing. Microsoft recently announced expanded capabilities for Azure Local that allow large AI models to run in environments that are physically and logically isolated from the public internet. That is vendor news, and this article is not a review of that product. We have not tested it. But the announcement is useful as a prompt to think carefully about what disconnected sovereign infrastructure could mean for UK law firms and other regulated entities handling genuinely sensitive data.

The underlying idea deserves scrutiny on its own terms.

What "Disconnected" Actually Means

A disconnected cloud is not simply an on-premises server. It is a full cloud-like environment, with orchestration, storage, compute, and increasingly AI inference capability, that operates without reliance on external internet connectivity. Data processed within it does not egress to vendor back-ends, telemetry endpoints, or model-training pipelines. The environment can be physically isolated, air-gapped in the traditional sense, or logically isolated through strict network controls.
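As a rough illustration of what "logically isolated" means in practice, the sketch below flags any configured destination address that falls outside a set of internal networks. The function name, addresses, and network ranges are hypothetical, and a real assessment would rely on audited firewall and routing configuration rather than a script like this.

```python
import ipaddress


def external_destinations(destinations, internal_networks):
    """Return the destination addresses that fall outside every internal network.

    Both arguments are hypothetical inputs: `destinations` is a list of IP
    address strings a workload is configured to contact, and
    `internal_networks` is a list of CIDR ranges inside the isolated perimeter.
    """
    networks = [ipaddress.ip_network(n) for n in internal_networks]
    flagged = []
    for dest in destinations:
        addr = ipaddress.ip_address(dest)
        # An address is acceptable only if some internal network contains it.
        if not any(addr in net for net in networks):
            flagged.append(dest)
    return flagged


# Example with made-up values: one internal inference endpoint, one external address.
print(external_destinations(["10.0.4.12", "52.96.0.1"], ["10.0.0.0/8"]))
```

In a genuinely disconnected environment the expectation is that this list is empty: no telemetry, licensing, or model endpoint should sit outside the perimeter.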

The critical development is that AI models can now be deployed and run within these environments. Until recently, accessing frontier AI capability meant calling external APIs, which meant data leaving your controlled perimeter. The shift to locally-deployed, inference-capable models changes that calculus. IBM and Informatica have both discussed AI-driven data governance in federated and fragmented environments as an industry trend for asset-intensive sectors. The logic applies equally to legal practice.

For law firms, the relevant question is not whether the technology exists. It does. The question is whether the threat model justifies the infrastructure investment and operational complexity.

The UK Legal and Regulatory Case

UK GDPR Article 5(2) requires data controllers to be able to demonstrate compliance with the data protection principles. That accountability obligation is not satisfied by a vendor's contractual assurances. It requires actual control over how personal data is processed, where it goes, and who can access it. In a standard public cloud AI deployment, that control is partial at best.

Disconnected sovereign infrastructure changes the accountability picture. If the model runs on hardware you control, in a location you specify, without external dependencies, the audit trail is yours. Demonstrating lawful processing becomes considerably more straightforward.

Sector-specific obligations reinforce this. The FCA's operational resilience framework requires in-scope firms to identify and protect important business services and to ensure continuity under stress scenarios. A firm whose AI-assisted document review workflow depends on an external API is exposed if that API becomes unavailable, whether through outage, geopolitical disruption, or the vendor's commercial decision to change terms. A disconnected deployment removes that runtime dependency, although the firm still has to maintain and update the environment itself.

The geopolitical dimension matters too. UK-regulated entities in critical national infrastructure, defence supply chains, and parts of financial services face increasing pressure to minimise reliance on US-headquartered technology providers, not because of any specific prohibition, but because of the legal exposure created by the US CLOUD Act and analogous instruments. Data held by a US company on infrastructure anywhere in the world can be subject to compelled disclosure under US legal process. Disconnected sovereign architectures, properly implemented, address that exposure at the infrastructure level rather than relying on contractual clauses that may not be enforceable in practice.

The restrictions on international transfers of personal data under UK GDPR, and the specific requirements of frameworks like the NHS Data Security and Protection Toolkit, point in the same direction. If the data never leaves a defined physical and logical perimeter, residency compliance is structural rather than procedural.

Who Actually Needs This

Bluntly: not everyone. A firm doing routine commercial work with no classified clients and no sector-specific data residency obligations probably does not need disconnected sovereign infrastructure. The cost and operational complexity are real. Running AI models locally requires significant compute, specialist expertise to maintain, and careful version management as models evolve.

But there is a meaningful subset of legal practice where the threat model is different. Firms advising on national security transactions, government procurement, defence matters, or sensitive personal data at scale are in a different position. So are in-house legal teams in regulated industries where the underlying business data is subject to its own sectoral controls.

For those firms, the question should not be "is this worth it?" but "what is our current exposure, and do we understand it?" Many firms using standard cloud AI tools have not conducted a thorough analysis of what data is being processed, under what terms, with what audit capability, and with what resilience against external disruption. That analysis should happen before selecting any infrastructure model.

The practical test is straightforward. Take your most sensitive active matter. Map every point at which AI tools touch that data. For each touchpoint, identify where processing occurs, under whose terms, and what your rights are if something goes wrong. If that exercise reveals gaps, disconnected sovereign architecture is one solution worth evaluating. It is not the only one, and it carries its own risks, including the risk that isolated environments become stale, under-maintained, or operationally burdensome.
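The mapping exercise above can be kept as a simple structured record. The following is a minimal sketch, assuming a hypothetical `Touchpoint` record with one field per question in the test; any question left unanswered is treated as a gap. The tool names and field values are invented for illustration.

```python
from dataclasses import dataclass, fields
from typing import Optional


@dataclass(frozen=True)
class Touchpoint:
    """One point at which an AI tool touches data on a matter (hypothetical schema)."""
    tool: str
    processing_location: Optional[str] = None  # where processing occurs
    governing_terms: Optional[str] = None      # under whose terms
    rights_on_failure: Optional[str] = None    # your rights if something goes wrong


def unanswered(tp):
    """Names of the questions that have no recorded answer for this touchpoint."""
    return [f.name for f in fields(tp) if getattr(tp, f.name) is None]


def find_gaps(touchpoints):
    """Map each tool with at least one unanswered question to those questions."""
    return {tp.tool: unanswered(tp) for tp in touchpoints if unanswered(tp)}


matter = [
    Touchpoint("document review assistant", "UK data centre",
               "negotiated agreement", "audit and termination rights"),
    Touchpoint("transcription plugin", None, "vendor default terms", None),
]
print(find_gaps(matter))
```

A record with no gaps is not the same as an acceptable position: the answers themselves still need legal and technical review. The sketch only shows where the firm cannot yet answer the question at all.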

What to Do Before Committing to Anything

Before engaging any vendor, including the one whose announcement prompted this article, a firm should do three things.

First, classify your data properly. Not all client data requires the same protection level. A tiered approach lets you apply expensive, operationally complex infrastructure only where it is genuinely warranted.

Second, review your existing cloud AI contracts. Most standard agreements give vendors significant latitude over how your data is processed and retained. If you are using AI tools under default terms, you almost certainly have less control than you think.

Third, take independent technical advice. The marketing around sovereign cloud is thick. The actual security properties of any given deployment depend on implementation detail that no vendor blog post will describe accurately. Claims of air-gap or physical isolation warrant verification against independently audited technical specifications.
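The tiered approach described in the first step can be expressed as a small policy table. This is a sketch under stated assumptions: the tier names, environment names, and the mapping between them are hypothetical placeholders, and each firm would define its own after classifying its data.

```python
from enum import IntEnum


class Tier(IntEnum):
    """Hypothetical data classification tiers, lowest sensitivity first."""
    ROUTINE = 1
    CONFIDENTIAL = 2
    HIGHLY_SENSITIVE = 3


class Environment(IntEnum):
    """Hypothetical processing environments, least isolated first."""
    PUBLIC_CLOUD_AI = 1
    PRIVATE_TENANT = 2
    DISCONNECTED = 3


# Assumed policy: the least isolated environment permitted for each tier.
MINIMUM_ENVIRONMENT = {
    Tier.ROUTINE: Environment.PUBLIC_CLOUD_AI,
    Tier.CONFIDENTIAL: Environment.PRIVATE_TENANT,
    Tier.HIGHLY_SENSITIVE: Environment.DISCONNECTED,
}


def is_permitted(tier, environment):
    """True if the environment is at least as isolated as the tier requires."""
    return environment >= MINIMUM_ENVIRONMENT[tier]


print(is_permitted(Tier.HIGHLY_SENSITIVE, Environment.PUBLIC_CLOUD_AI))
print(is_permitted(Tier.ROUTINE, Environment.DISCONNECTED))
```

The point of the table is cost control as much as security: only the top tier forces the expensive, operationally complex option, while lower tiers remain free to use cheaper infrastructure.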

The concept of running powerful AI in a controlled, isolated environment is sound. Whether any specific product delivers on that concept is a different question. That warrants independent evaluation before any firm stakes its client confidentiality obligations on the answer.

If you are reassessing how your firm handles AI and sensitive data, the starting point is understanding your current position, not selecting new infrastructure. That is the work worth doing first.

Sources

1. Why AI is the backbone of data governance in asset-intensive industries

2. AI Governance: Best Practices and Importance

3. Microsoft Sovereign Cloud adds governance, productivity and support for large AI models securely running even when completely disconnected

4. Why data governance must evolve for the AI era

5. What is cloud data governance?

Chris Jeyes

Barrister & Leading Junior

Founder of Lextrapolate. 20+ years at the Bar. Legal 500 Leading Junior. Helping lawyers and legal businesses use AI effectively, safely and compliantly.

Get in TouchMore from Lextrapolate

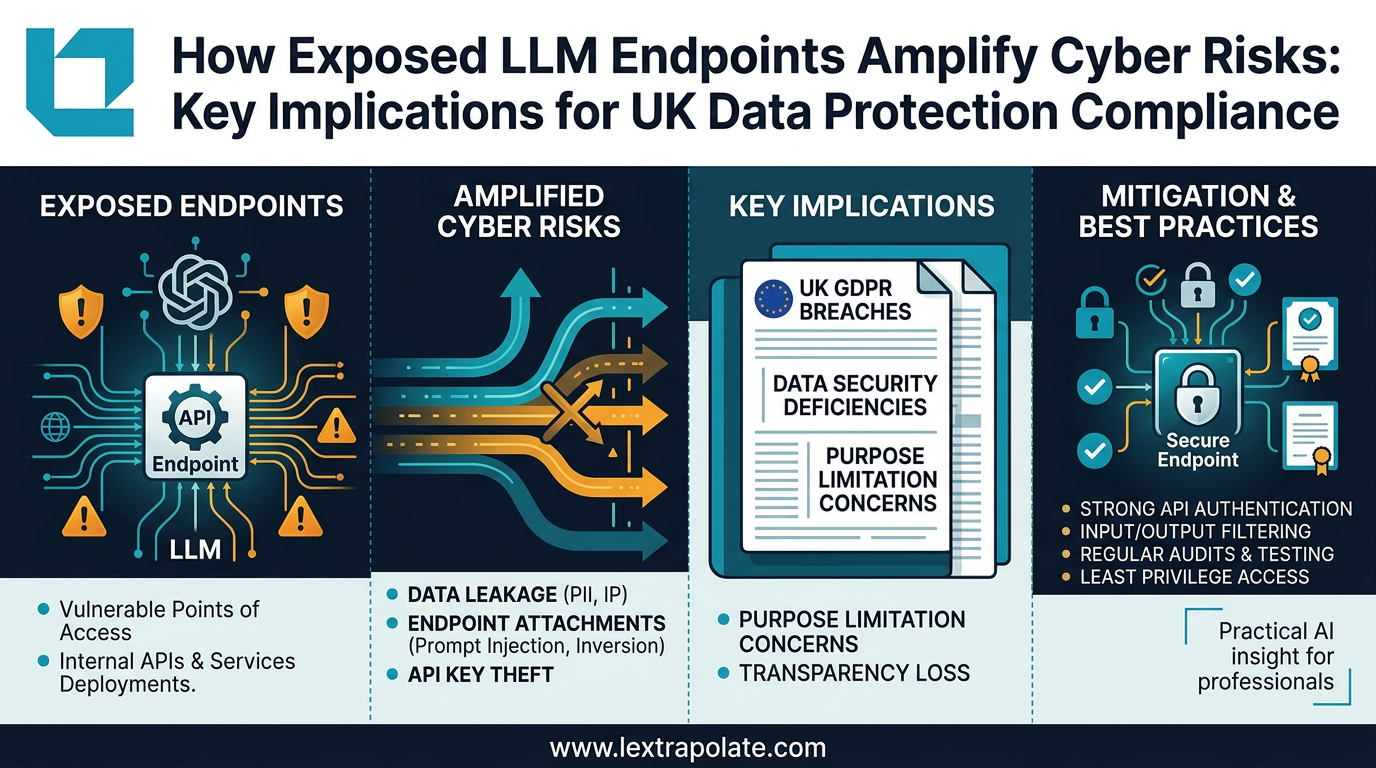

What If Your Law Firm's AI Pipeline Became the Breach? The Infrastructure Risk Nobody Is Talking About

As lawyers build internal LLM tools, the real security risk isn't the AI model. It's the APIs, endpoints and pipelines connecting it to everything else.

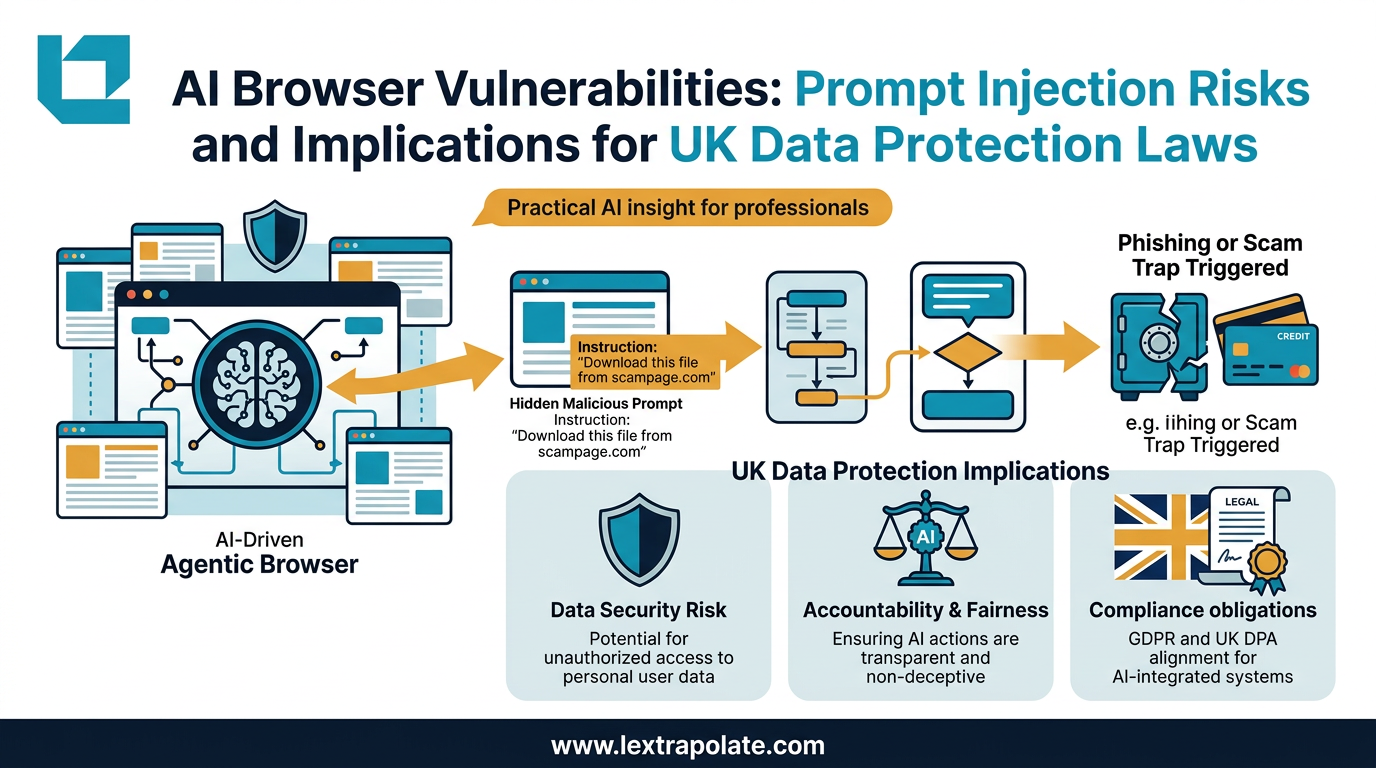

Agentic AI Browsers and Prompt Injection: What Legal Professionals Need to Know

AI browsers that act autonomously on your behalf can be hijacked without a single click. Here is what that means for law firms and their data.

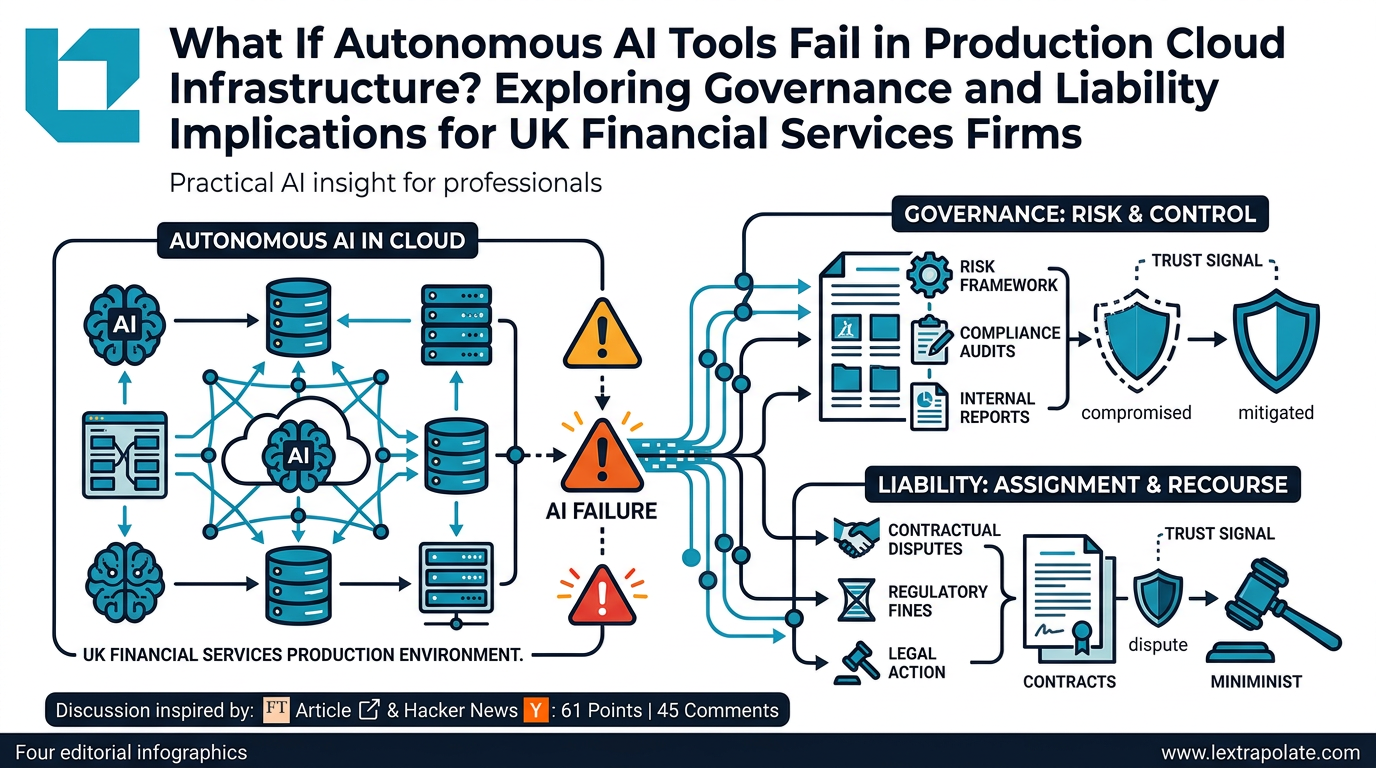

What Happens If Autonomous AI Tools Fail in Production Cloud Infrastructure? Exploring Governance and Liability Implications for UK Financial Services Firms

An AWS outage linked to an autonomous AI coding tool raises urgent questions for UK-regulated firms about oversight, liability, and operational resilience.