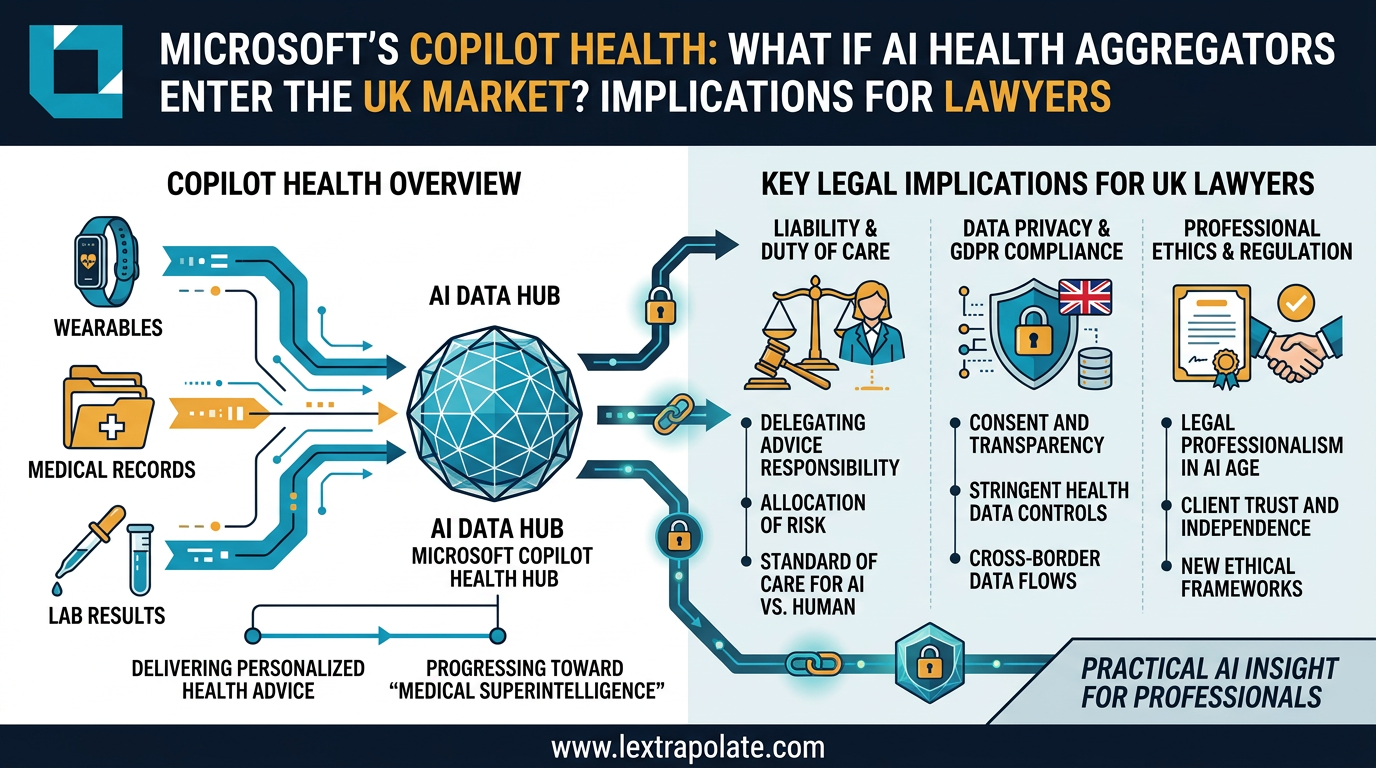

When AI Knows Your Health Better Than Your GP: What Multi-Modal Data Aggregation Means for Lawyers

On 12 March 2026, Microsoft unveiled a system designed to achieve "medical superintelligence" by synthesising blood work, fitness-tracker data, and clinical notes into a single clinical picture. For UK lawyers, the significance lies not in the healthcare application, but in the underlying multi-modal architecture that is now migrating toward complex legal document analysis.

That capability now exists in prototype form. And the interesting question for lawyers is not what it means for healthcare. It is what happens when the same architecture arrives in legal practice.

What Multi-Modal Data Aggregation Actually Is

Microsoft announced Copilot Health on 12 March 2026 as a US early-access product. It draws on three kinds of source: wearable data from services including Apple Health, Fitbit, and Oura; electronic health records from more than 50,000 US providers via HealthEx; and laboratory results. It synthesises all of this to answer health queries and help users prepare for clinical appointments. Microsoft's general Copilot product already handles around 50 million health queries a day.

The ambition, expressed by Microsoft executives including Dr. Dominic King, is explicit: "medical superintelligence." We have not tested Copilot Health ourselves, and all current coverage is based on the announcement rather than on independent evaluation. Microsoft says its clinical team numbers over 230 physicians and cites ISO/IEC 42001 certification, but the advanced features have not yet been independently clinically evaluated.

Set aside the marketing language. The underlying engineering concept is what matters. This is an AI system designed to pull structured and unstructured data from multiple heterogeneous sources, each with its own format and provenance, and to reason across all of them simultaneously to produce a coherent, actionable output. That is not a chatbot. That is an AI agent built for complex, multi-source synthesis.

Legal practice runs on exactly this kind of problem.

Why This Architecture Matters for Legal Document Analysis

A large commercial transaction generates hundreds of documents. Due diligence bundles. Board minutes. Disclosure letters. Warranties. Side letters. Ancillary agreements. Each document has its own internal logic. The real analytical work is finding the connections: the warranty that is qualified by a disclosure that references a document in a data room that itself contradicts a board resolution dated three weeks earlier.
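To make that chain of connections concrete, here is a minimal sketch that treats documents as nodes in a graph and the relationships between them as labelled links. Everything in it is an illustrative assumption: the class name `DocumentGraph`, the relation labels, and the document names are hypothetical, and a real tool would have to extract these links from the documents rather than have them entered by hand.

```python
from collections import defaultdict


class DocumentGraph:
    """Hypothetical store of labelled links between transaction documents."""

    def __init__(self):
        # links[source] holds a list of (relation, target) pairs
        self.links = defaultdict(list)

    def link(self, source, relation, target):
        self.links[source].append((relation, target))

    def chains_to_contradiction(self, start):
        """Return every path from `start` that ends in a 'contradicts' link."""
        found = []
        stack = [(start, [start], {start})]
        while stack:
            node, path, seen = stack.pop()
            for relation, target in self.links.get(node, []):
                if target in seen:  # do not walk in circles
                    continue
                step = path + [relation, target]
                if relation == "contradicts":
                    found.append(step)
                else:
                    stack.append((target, step, seen | {target}))
        return found


# Hypothetical example mirroring the chain described above.
graph = DocumentGraph()
graph.link("Warranty 7.2", "qualified_by", "Disclosure Letter para 4")
graph.link("Disclosure Letter para 4", "references", "Data Room doc 113")
graph.link("Data Room doc 113", "contradicts", "Board Resolution")

chains = graph.chains_to_contradiction("Warranty 7.2")
for chain in chains:
    print(" -> ".join(chain))
```

The traversal itself is trivial. The hard part, and the part vendors should be asked about, is populating the links accurately in the first place.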

Senior lawyers do this by reading everything and holding it all in working memory simultaneously. Junior lawyers do it in teams with spreadsheets and checklists. It is slow, expensive, and error-prone.

The capability being demonstrated in the health context is AI that ingests multi-modal, multi-source data and reasons across it in real time. The same capability would be transformative in legal document analysis. The legal profession has had narrow AI tools for document review for years. What is different here is the synthesis layer: the AI not merely flagging a clause but understanding how that clause interacts with three other documents it has already read.

Several legal technology providers are working on this. None has yet demonstrated it at the level of sophistication being claimed, even provisionally, in the health context. That gap will not persist indefinitely.

UK Regulatory Exposure When This Crosses the Atlantic

Copilot Health is a US product, for now. If products of this type enter the UK market, the regulatory picture becomes considerably more complex than it is in the US.

Health data is special category data under the UK GDPR and the Data Protection Act 2018. Processing it requires an Article 9 basis, and in practice that means explicit consent or specific statutory grounds. An AI system aggregating wearable data, NHS records, and laboratory results would face immediate scrutiny from the ICO, particularly around automated decision-making under Article 22. If outputs are framed as advice rather than information, the MHRA's Software as a Medical Device framework becomes relevant, and obtaining a UKCA mark for an AI product that claims to interpret diagnostic data is not straightforward.

For the legal equivalent, the same structural issue arises. An AI agent that synthesises legal documents and generates advice-level output touches the reserved legal activities framework under the Legal Services Act 2007. If the synthesis produces something that looks like legal advice, and a client acts on it, the question of who is liable does not resolve itself by pointing at the software.

Law firms adopting multi-modal AI document analysis tools will need to think carefully about where the tool ends and professional judgement begins. That boundary has to be real, not cosmetic. The Solicitors Regulation Authority has been clear that professional responsibility is not delegable to a software vendor. Barristers remain personally responsible for the work product they sign off on.

The Monday Morning Test

If you are a solicitor in a transactions team or a barrister reviewing disclosure, what should you actually do with this?

First, watch the health AI sector closely. It does not affect your clients directly yet, but it is the test bed for multi-source AI synthesis. The technical problems being solved there (provenance tracking, resolution of conflicting data, confidence scoring, audit trails) are the same problems that legal AI synthesis tools will need to solve.

Second, when vendors approach you with claims about AI that "reads your entire data room and identifies risk," ask how it handles conflicting provisions across documents. Ask what it does when two sources give inconsistent information. Ask for the audit trail. Microsoft's health product is being built with more than 230 physicians and ISO/IEC 42001 certification behind it. Legal AI products should face the same level of scrutiny before you rely on their output in advice to a client.

Third, get your data governance in order now. If your firm is considering any AI tool that ingests client documents at scale, the legal basis for processing that data, the retention policy, and the security architecture need to be established before deployment, not after the first client complaint.
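For readers who want to see what provenance tracking, conflict detection, and an audit trail look like in practice, the following is a minimal sketch under stated assumptions. The `Assertion` record, the 0.0 to 1.0 confidence scale, and the sample documents and dates are all hypothetical and do not describe any vendor's product.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Assertion:
    subject: str       # what the statement is about
    value: str         # what the source says
    source: str        # provenance: which document said it
    confidence: float  # extraction confidence, assumed scale 0.0 to 1.0


def find_conflicts(assertions):
    """Group assertions by subject and keep subjects whose sources disagree."""
    by_subject = defaultdict(list)
    for a in assertions:
        by_subject[a.subject].append(a)
    conflicts = {}
    for subject, group in by_subject.items():
        if len({a.value for a in group}) > 1:
            # Highest-confidence assertion first; nothing is discarded.
            conflicts[subject] = sorted(
                group, key=lambda a: a.confidence, reverse=True
            )
    return conflicts


def audit_trail(conflicts):
    """Produce one human-readable line per conflicting assertion."""
    return [
        f"{subject}: '{a.value}' per {a.source} (confidence {a.confidence:.2f})"
        for subject, group in conflicts.items()
        for a in group
    ]


# Hypothetical sample data for illustration only.
assertions = [
    Assertion("completion date", "2025-06-30", "Share Purchase Agreement cl. 4", 0.95),
    Assertion("completion date", "2025-07-14", "Side Letter", 0.80),
    Assertion("governing law", "England and Wales", "Share Purchase Agreement cl. 20", 0.99),
]

conflicts = find_conflicts(assertions)
trail = audit_trail(conflicts)
for line in trail:
    print(line)
```

Note the design choice: the sketch surfaces the conflict and shows where each version came from, but does not pick a winner. Deciding which document prevails is a matter of professional judgement, and a tool that silently resolves the conflict is one to question closely.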

The Capability Is Coming. The Readiness Is Not.

The technology being trialled in healthcare is not being developed for healthcare alone. The companies building it are platform businesses. Microsoft, OpenAI, Amazon, and Anthropic are all moving into health AI simultaneously because health data is the most complex, heterogeneous, high-stakes data environment available. Solving synthesis at that level of difficulty produces a capability that transfers directly to other professional domains.

Legal data is not as complex as clinical data. That means legal applications of this architecture should mature faster, and work better out of the box, than the health versions.

The firms that understand what multi-modal AI synthesis actually is, not the marketing version but the engineering reality, will be positioned to adopt it intelligently when it matures. The firms that wait to understand it until a vendor is sitting across the table from them will be negotiating from a position of ignorance.

That is always a bad position for a lawyer to occupy.

Lextrapolate monitors developments in AI capability and deployment for the legal sector. If your firm is assessing AI tools for document analysis or due diligence workflows, contact us before you sign anything.

Chris Jeyes

Barrister & Leading Junior

Founder of Lextrapolate. 20+ years at the Bar. Legal 500 Leading Junior. Helping lawyers and legal businesses use AI effectively, safely and compliantly.

Get in TouchMore from Lextrapolate

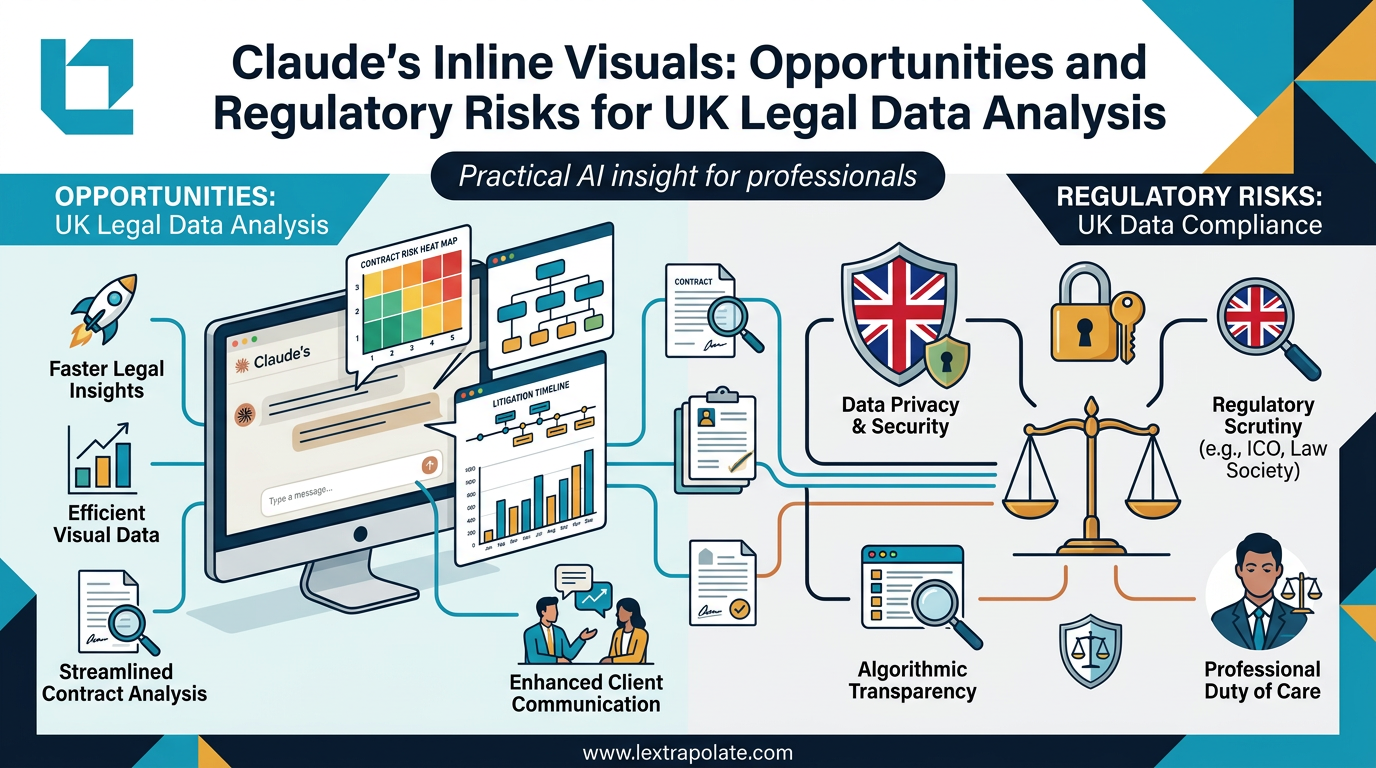

AI-Generated Visuals in Legal Work: Useful Shortcut or Regulatory Trap?

AI can now turn data into interactive charts mid-conversation. For lawyers, that's useful. It also raises questions about transparency, data protection, and professional responsibility.

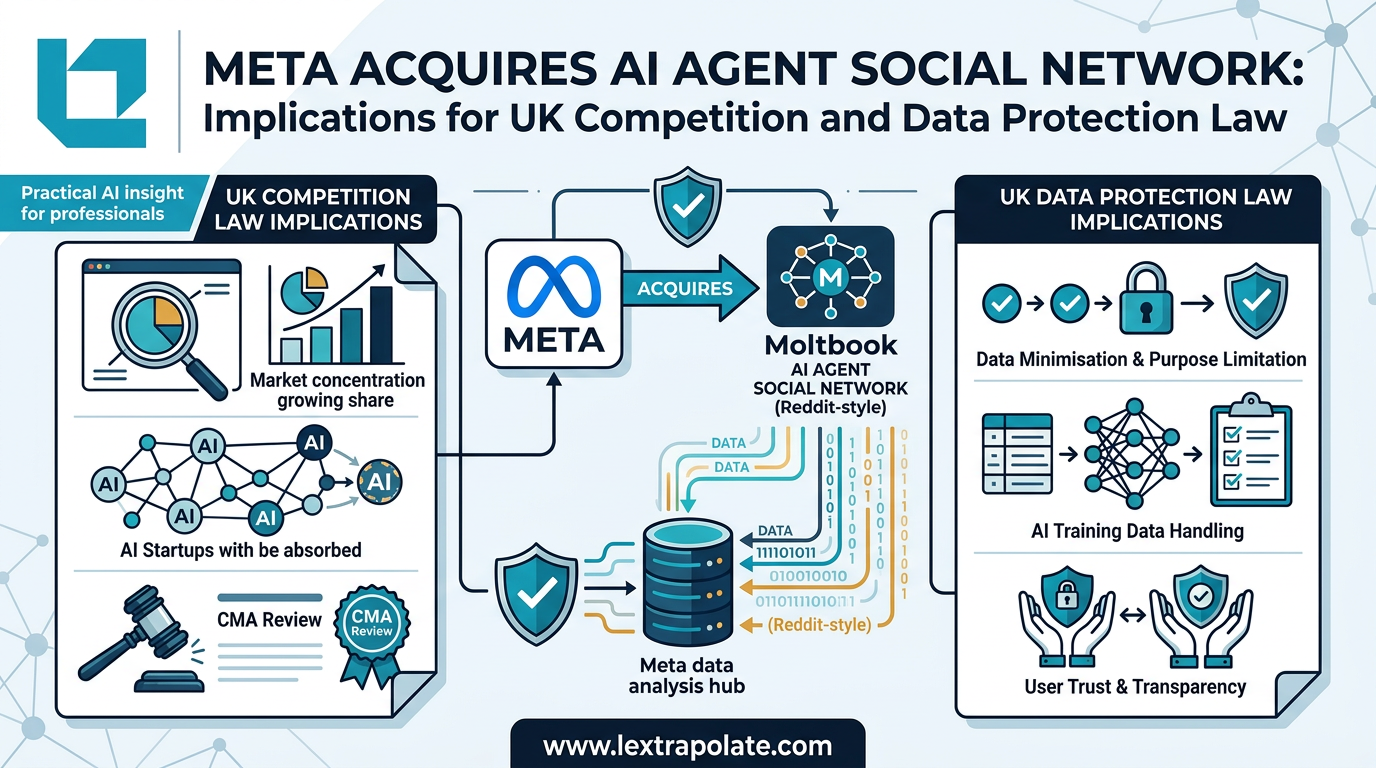

AI Agents Talking to Each Other: What It Means When Social Networks Go Autonomous

Meta has acquired a platform built for AI-to-AI communication. The regulatory and practical implications for UK professionals are worth examining carefully.

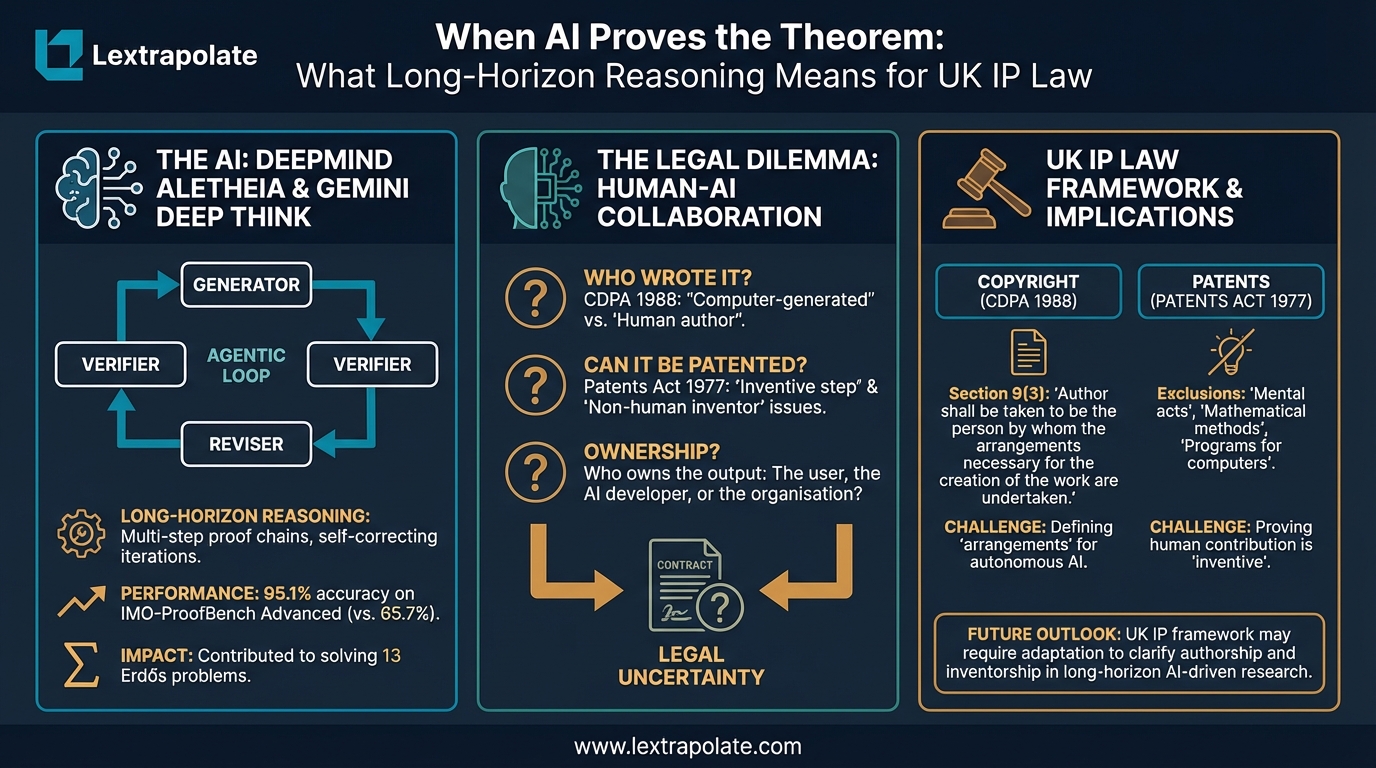

When AI Proves the Theorem: What Long-Horizon Reasoning Means for UK IP Law

AI systems can now generate and verify complex mathematical proofs autonomously. UK copyright and patent law is not ready for what that means.