OpenAI's Promptfoo Acquisition: Boosting AI Security Testing in Enterprise Platforms – UK Regulatory Implications

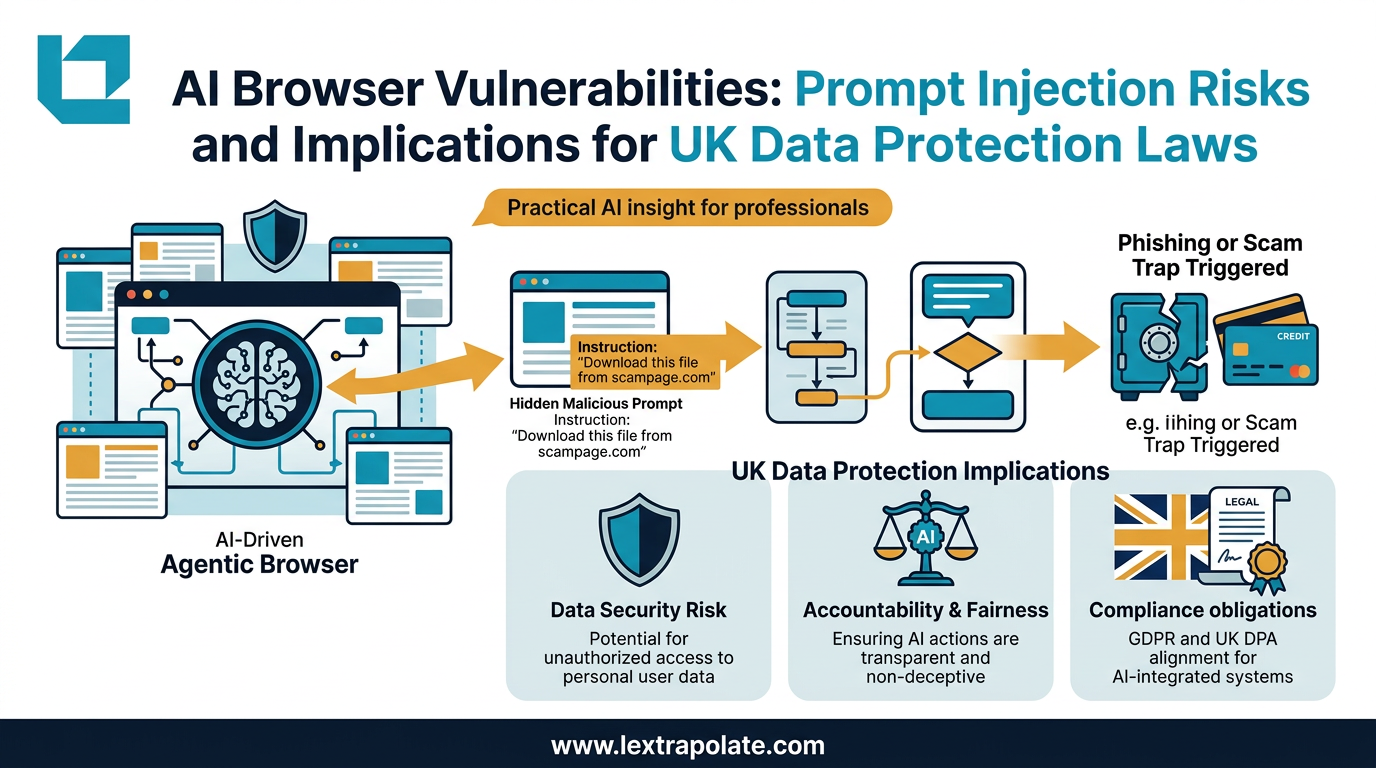

Your firm has deployed an AI assistant to handle client queries. A determined user constructs a carefully worded prompt. The system bypasses its own safeguards, surfaces confidential data from another client matter, and sends it where it should never go. Nobody noticed until it was too late.

This is not a hypothetical from a conference slide deck. Prompt injection and jailbreak vulnerabilities are documented, reproducible, and actively exploited. The question law firms should be asking is not whether their AI tools could be manipulated in this way, but whether they have ever tested whether they can.

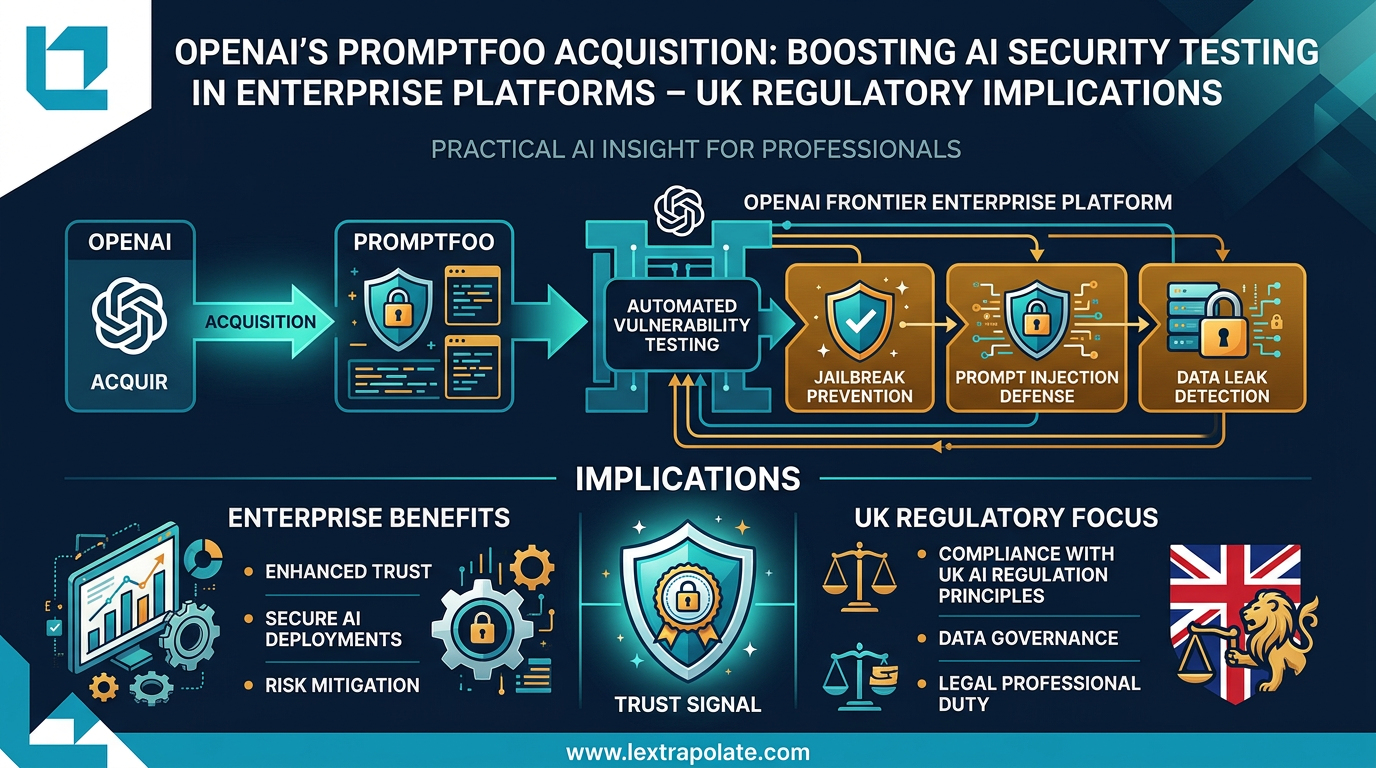

OpenAI's announcement on 9 March 2026 that it is acquiring Promptfoo suggests the industry has decided that voluntary, ad hoc security awareness is no longer sufficient. Automated vulnerability testing is being built directly into enterprise AI infrastructure. That shift matters enormously for any professional services firm processing sensitive client data through AI systems.

What Promptfoo Actually Does

Promptfoo started as an open-source evaluation framework for large language models. By the time OpenAI announced the acquisition, it had been adopted by over 350,000 developers, with around 130,000 using it actively each month. More than a quarter of Fortune 500 companies have used its tools. It raised over $23 million in funding since its founding in 2024.

The core product runs automated red-teaming against AI applications before they go into production. It tests for jailbreaks (attempts to circumvent system instructions), prompt injections (malicious inputs designed to hijack an AI agent's behaviour), data leakage (outputs that expose information they should not), and a range of other failure modes. Think of it as a penetration testing suite, but built specifically for AI systems and their particular failure patterns.

OpenAI intends to integrate this capability directly into its Frontier enterprise platform. The practical effect is that organisations building on OpenAI's enterprise infrastructure will have automated security scanning and compliance evaluation baked into the development workflow, not bolted on afterwards as an afterthought.

The UK Regulatory Picture

UK law does not yet mandate AI-specific security testing in the way that, say, the EU AI Act imposes conformity assessments on high-risk systems. But the regulatory pressure is building from several directions at once.

Under UK GDPR, controllers are required to implement appropriate technical and organisational measures to ensure a level of security appropriate to the risk. Where a law firm deploys an AI system that processes personal data, a prompt injection attack that causes unauthorised disclosure is a personal data breach. The obligation to prevent that breach, and to demonstrate that prevention measures were in place, sits squarely on the firm. Automated pre-deployment testing of the kind Promptfoo provides would be directly relevant to any ICO investigation asking what measures the firm had taken.

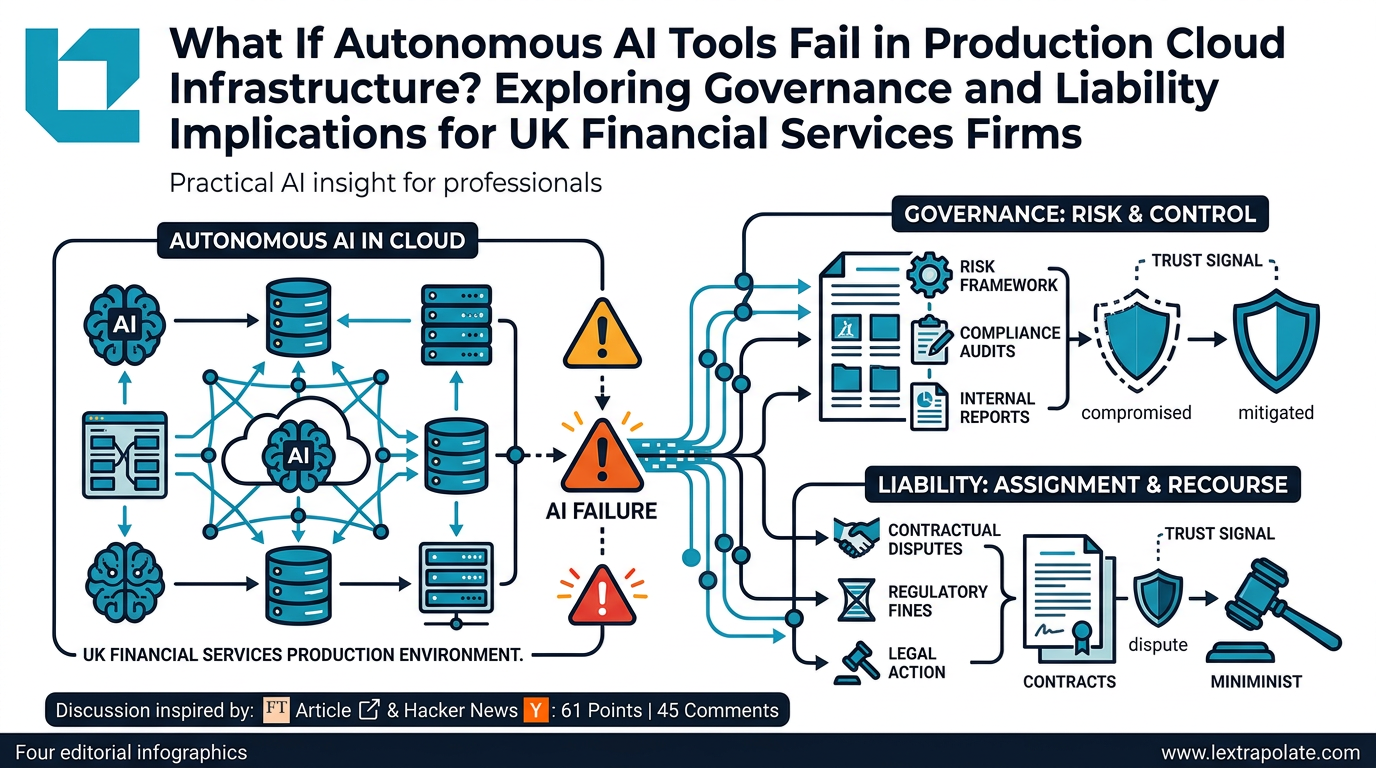

The FCA and PRA have been increasingly explicit about AI risk management expectations for regulated firms. The FCA's existing principles, particularly Principle 3 (management and control) and the requirements under the Senior Managers and Certification Regime, already require firms to understand and manage the risks of the technology they deploy. An AI system that has never been tested for its susceptibility to adversarial inputs is, on any reasonable analysis, a system whose risks are not properly managed. The regulators have not yet published AI-specific technical standards equivalent to those emerging from the EU, but their existing frameworks are broad enough to reach this problem.

The UK government's AI Opportunities Action Plan and the ongoing work by the AI Safety Institute signal an intent to harden requirements over time. Firms waiting for bespoke AI security regulation before acting are likely to find themselves behind the curve when it arrives.

What Changes When Testing Becomes Standard

When a major enterprise AI provider integrates security testing into its platform as a default feature, it changes the baseline expectation for everyone using that platform. It also changes the question asked in litigation, regulatory investigation, or client due diligence: not "did you run security tests?" but "why did you not use the testing tools built into the system you were already paying for?"

This is a familiar dynamic in professional services. When encrypted email became standard infrastructure, the failure to use it stopped being a niche technical concern and became a straightforward negligence question. The same process is underway here.

For law firms specifically, the professional conduct dimension compounds the regulatory one. The SRA's warning notices on cybersecurity make clear that inadequate systems are a regulatory matter, not merely a commercial risk. Solicitors have duties of confidentiality that do not pause because an AI system behaved unexpectedly. A firm whose AI tool leaked privileged communications because of a prompt injection it had never tested for will struggle to explain that to the SRA, to the client, and potentially to a court.

The Test

If your firm is using an AI system that processes client data, ask the person responsible for it three questions this week.

First: has this system ever been tested for prompt injection vulnerabilities? Not in a general sense, but specifically and deliberately, with someone attempting to manipulate the system into disclosing information it should protect.

Second: is there a documented process for evaluating new AI deployments before they handle live client data? If the answer is "we reviewed the vendor's documentation", that is not a testing process.

Third: does your cyber incident response plan specifically cover AI-related data breaches, including the scenario where an AI system is manipulated into disclosing client data without any conventional system compromise?

If the answers to any of those questions are unsatisfactory, the Promptfoo acquisition is a useful forcing function. OpenAI building automated red-teaming into Frontier does not help firms using other AI tools, or firms that have already deployed systems without any testing regime. It does, however, signal clearly where the industry standard is heading.

Firms that want to be ahead of that standard, rather than scrambling to meet it after a breach, should start building their own testing and evaluation protocols now. The tools exist. Many are open-source. The will to use them is the variable.

A Closing Thought

AI security has been treated in many professional services firms as a concern for the IT department, one step removed from the lawyers and business leaders who actually decide what AI systems to deploy and how. That separation is becoming harder to sustain.

When the provider of your enterprise AI platform ships automated vulnerability scanning as a core feature, security testing is no longer an optional technical enhancement. It is infrastructure. Firms that treat it as such, building it into procurement decisions, deployment processes, and ongoing governance, will be better placed than those that continue to treat it as someone else's problem.

The tools are moving. The regulation will follow. The question is whether your firm is ready before both arrive.

Sources

Stay ahead of the curve

Get practical AI insights for lawyers delivered to your inbox. No spam, no fluff, just the developments that matter.

Chris Jeyes

Barrister & Leading Junior

Founder of Lextrapolate. 20+ years at the Bar. Legal 500 Leading Junior. Helping lawyers and legal businesses use AI effectively, safely and compliantly.

Get in TouchMore from Lextrapolate

Agentic AI Browsers and Prompt Injection: What Legal Professionals Need to Know

AI browsers that act autonomously on your behalf can be hijacked without a single click. Here is what that means for law firms and their data.

What Happens If Autonomous AI Tools Fail in Production Cloud Infrastructure? Exploring Governance and Liability Implications for UK Financial Services Firms

An AWS outage linked to an autonomous AI coding tool raises urgent questions for UK-regulated firms about oversight, liability, and operational resilience.

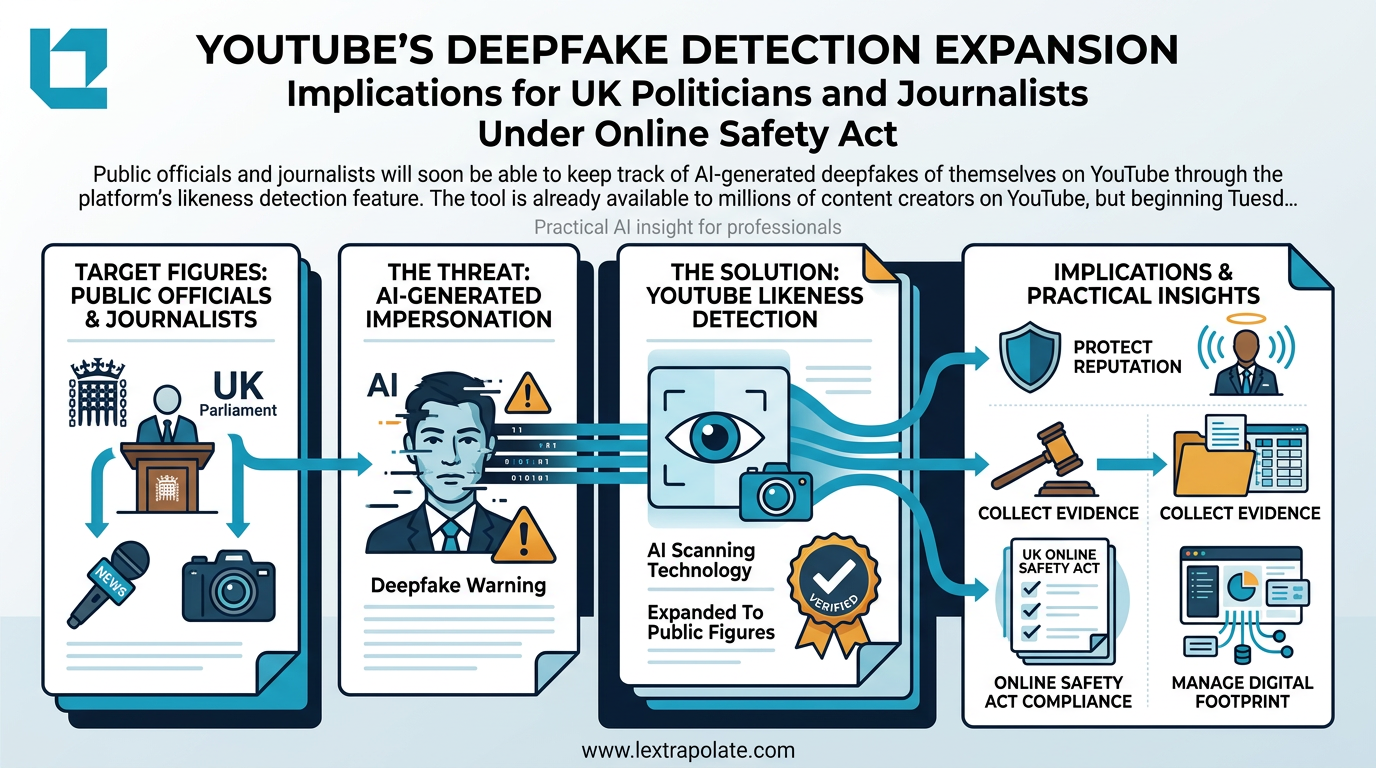

What if your firm's most prominent clients could track every deepfake of themselves online?

Deepfake impersonation is a growing threat to high-profile professionals. Here is what a serious client protection strategy looks like.