Seeing Is No Longer Believing: What the Der Spiegel AI Image Scandal Means for UK Professionals

Der Spiegel has withdrawn images from its Iran coverage after forensic analysis found they were most likely AI-generated or AI-altered, showing that even well-resourced editorial teams can fail to tell a photograph from a Flux 2 fabrication. For UK legal professionals, the failure signals that visual evidence can no longer be treated as self-authenticating, and that verification is becoming a question of liability.

Last week, Der Spiegel confirmed it had removed multiple images from its Iran coverage after forensic analysis concluded they were most likely AI-generated or AI-altered. The images came via SalamPix, distributed through the French agency Abaca Press, and had already spread to other major German outlets including Die Zeit and Süddeutsche Zeitung. Dutch agency ANP had earlier blocked over 1,000 images from the same source, triggering the wider investigation. Forensics firm Neuramancer classified three of five examined images as likely AI-generated and one as having been manipulated using Flux 2.

The images were not accidental fakes. They served a purpose: to shape the narrative around Iranian military capability and political authority at a moment of acute geopolitical tension.

That is the point. This was not a deepfake celebrity scandal or an academic demonstration of what AI can do. This was state-adjacent propaganda entering trusted distribution channels, clearing editorial review, and reaching millions of readers. The supply chain failed at every stage.

How Propaganda Gets a Press Pass

Photo agencies operate on trust. Editors at volume-publishing newsrooms cannot individually verify every image they license. The system assumes that accredited agencies have done the work upstream. SalamPix had not. Or, more precisely, someone upstream in the chain had deliberately exploited that assumption.

AI image generation has reached a fidelity where the artefacts that once flagged a fake (inconsistent shadows, warped fingers, misaligned text) are either absent or subtle enough to survive casual review. Flux 2, the model apparently used to alter at least one of the Spiegel images, is an open-weight model. Anyone can run it. The barrier to producing plausible propaganda imagery is now close to zero.

This is not a German problem. The same distribution infrastructure serves UK newsrooms. Reuters, Getty, AP, and their downstream partners all operate on similar trust-based models. If a bad-faith actor embeds fabricated images into a credible-looking agency feed, UK outlets face exactly the same exposure Der Spiegel encountered.

The UK Legal Exposure

Three distinct legal risks arise for UK publishers who republish AI-fabricated images without adequate verification.

Defamation. Under the Defamation Act 2013, a publisher whose statement causes or is likely to cause serious harm to a living person's reputation bears the burden of establishing a defence. An AI-generated image falsely depicting a named individual, say a politician in a compromising context, could ground a claim. "It came from a reputable agency" is not, by itself, a defence. The publisher published it. The defence of truth (section 2, which replaced common-law justification) requires the imputation to be substantially true; honest opinion (section 3) requires a supporting fact that existed at the time of publication. A fabricated image is likely to fail both.

The Online Safety Act 2023. Section 179 of the OSA created a new offence of sending a communication the sender knows to be false, with intent to cause non-trivial psychological or physical harm. More directly relevant to platforms, the Act imposes duties on regulated services to manage illegal content, including content designed to manipulate democratic processes or incite civil unrest where it amounts to a relevant offence. AI-fabricated war propaganda can fall within that territory. Platforms hosting such material without adequate detection or removal mechanisms face enforcement action from Ofcom.

The Editors' Code of Practice. Clause 1 requires the press to take care not to publish inaccurate, misleading or distorted information or images. The code does not offer a safe harbour for agency-sourced material. IPSO has previously upheld complaints against outlets that published inaccurate content even where the error originated elsewhere. An AI-fabricated image that misrepresents military capability or political events is, on any reading, distorted information.

The combined picture is uncomfortable. UK publishers who treat agency-sourced images as pre-verified assume a risk they have not properly priced.

This Is Not Only a Media Problem

The relevance extends well beyond newsrooms. Consider where visual evidence actually matters in professional practice.

Solicitors use photographs in property disputes, personal injury claims, and planning appeals. Barristers present images in evidence. Insurance adjusters assess claims partly on photographic documentation. Compliance teams verify KYC documentation that increasingly includes photographed identity materials. Due diligence processes in M&A transactions often rely on photographs of assets, premises, or conditions.

AI image generation can plausibly fabricate any of these. A fraudulent personal injury claimant can generate images of an injury. A property seller can alter photographs to conceal defects. A sanctions-evading counterparty can generate images of facilities that do not exist or conceal those that do.

The professional who accepts a photograph at face value in 2026 is taking a risk that did not exist five years ago.

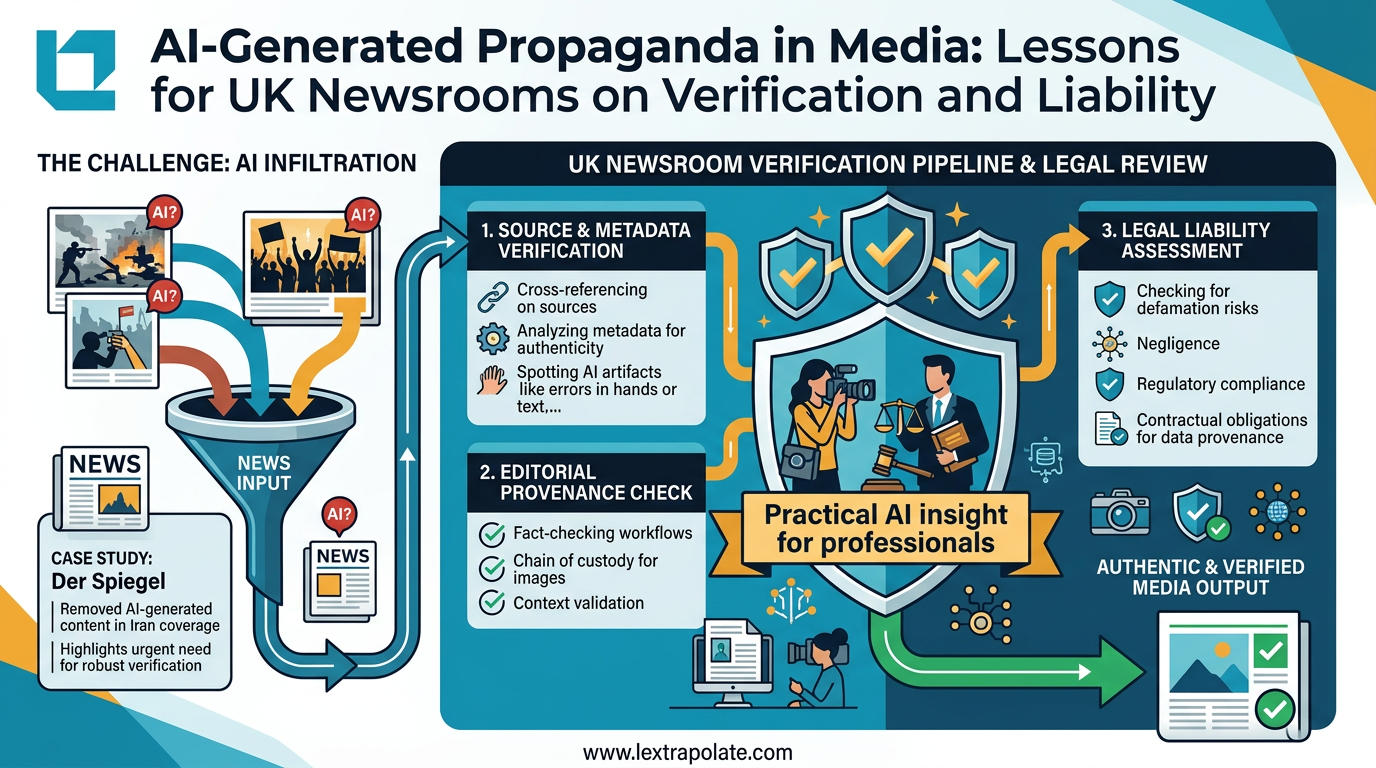

What a Verification Workflow Actually Looks Like

What should you actually do differently?

First, stop treating origin as verification. "It came from [agency/client/counterparty]" tells you about the source, not the authenticity. Those are different questions.

Second, run basic provenance checks on any image that matters. Reverse image search tools such as Google's and TinEye can show whether an image has appeared online before. C2PA metadata, where present, provides a cryptographically signed provenance record. These checks take under two minutes and will catch some fabricated images, particularly recycled or previously published ones. A clean result is not proof of authenticity.

Third, for higher-stakes images, commission forensic analysis. Neuramancer is one firm operating in this space; others include Truepic and Hive Moderation. I have not tested any of these independently, and capability claims in this sector warrant scrutiny before procurement. But the category of forensic image analysis is real and maturing.

Fourth, document your verification steps. In litigation, showing that you took reasonable steps to verify visual evidence matters both for professional conduct purposes and for the credibility of your case. A paper trail of verification is worth having.

Fifth, update your supplier contracts. If you license images from agencies or receive photographs from third parties in a professional context, consider whether your terms now need to address AI generation. A warranty that supplied images are authentic photographs, not AI-generated or AI-altered, costs little to include and shifts risk appropriately.
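For the fourth step, the paper trail need not be elaborate. What follows is a minimal sketch in Python, not a recommended product or a forensic tool. It assumes you want one record per image, tied to the exact file by its SHA-256 hash, with a note of which checks were run and by whom. The function names, the field names and the log file format are all my own illustrative choices, and the check results are typed in by the reviewer rather than produced by the code.

```python
import hashlib
import json
import tempfile
from datetime import datetime, timezone
from pathlib import Path


def sha256_of_file(path: Path) -> str:
    """Return the SHA-256 hex digest of a file, read in chunks."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(65536), b""):
            digest.update(chunk)
    return digest.hexdigest()


def record_verification(image_path, source, checks, log_path, reviewer):
    """Append one verification record to a JSON Lines log and return it.

    `checks` is a dict of hypothetical check names to the reviewer's notes,
    e.g. {"reverse_image_search": "no earlier matches found"}.
    """
    image_path = Path(image_path)
    record = {
        "file": image_path.name,
        "sha256": sha256_of_file(image_path),  # ties the record to this exact file
        "source": source,                      # where the image came from
        "checks": checks,                      # what was checked and what was found
        "reviewer": reviewer,
        "recorded_at": datetime.now(timezone.utc).isoformat(),
    }
    with Path(log_path).open("a", encoding="utf-8") as log:
        log.write(json.dumps(record, sort_keys=True) + "\n")
    return record


if __name__ == "__main__":
    # Demonstration with a throwaway file; real use would point at the image itself.
    with tempfile.TemporaryDirectory() as folder:
        image = Path(folder) / "exhibit-01.jpg"
        image.write_bytes(b"placeholder bytes, not a real photograph")
        entry = record_verification(
            image,
            source="supplied by counterparty",
            checks={"reverse_image_search": "no earlier matches found",
                    "c2pa_metadata": "absent"},
            log_path=Path(folder) / "verification-log.jsonl",
            reviewer="A. Reviewer",
        )
        print(entry["sha256"], entry["recorded_at"])
```

The hash matters because it lets you show later that the image you checked is the image you relied on. An append-only log with timestamps is a simple design choice; a case management system that records the same fields would serve equally well.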

The Structural Point

Der Spiegel is a serious, well-resourced outlet. Its editorial processes are not casual. That AI-fabricated propaganda cleared those processes is the significant fact here.

Verification systems designed for a world where photographs were expensive and technically demanding to fake are not fit for a world where convincing fabrication takes seconds. That gap is now being exploited.

UK professionals across media, law, insurance, and compliance need to treat visual evidence the way they treat written witness evidence: not as presumptively true, but as something that requires corroboration before reliance.

The Der Spiegel incident is an early public example. It will not be the last.

If your organisation needs to audit its current verification processes for AI-generated content, or wants to understand where the legal risk sits in your specific context, contact Lextrapolate.

Chris Jeyes

Barrister & Leading Junior

Founder of Lextrapolate. 20+ years at the Bar. Legal 500 Leading Junior. Helping lawyers and legal businesses use AI effectively, safely and compliantly.

Get in TouchMore from Lextrapolate

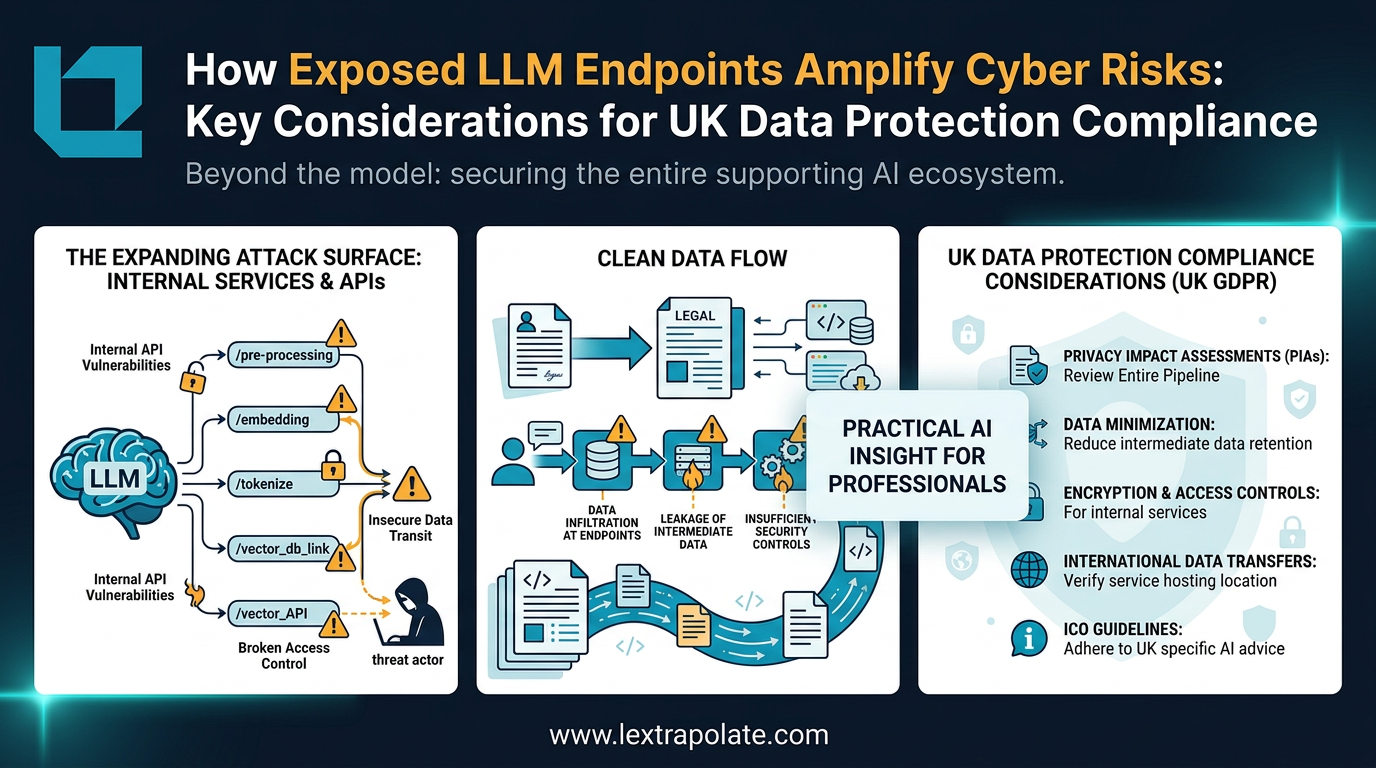

What if your AI workflow was the security breach? The hidden risks of LLM infrastructure

As professionals build custom AI tools and workflows, the real security risk isn't the model. It's the infrastructure connecting everything together.

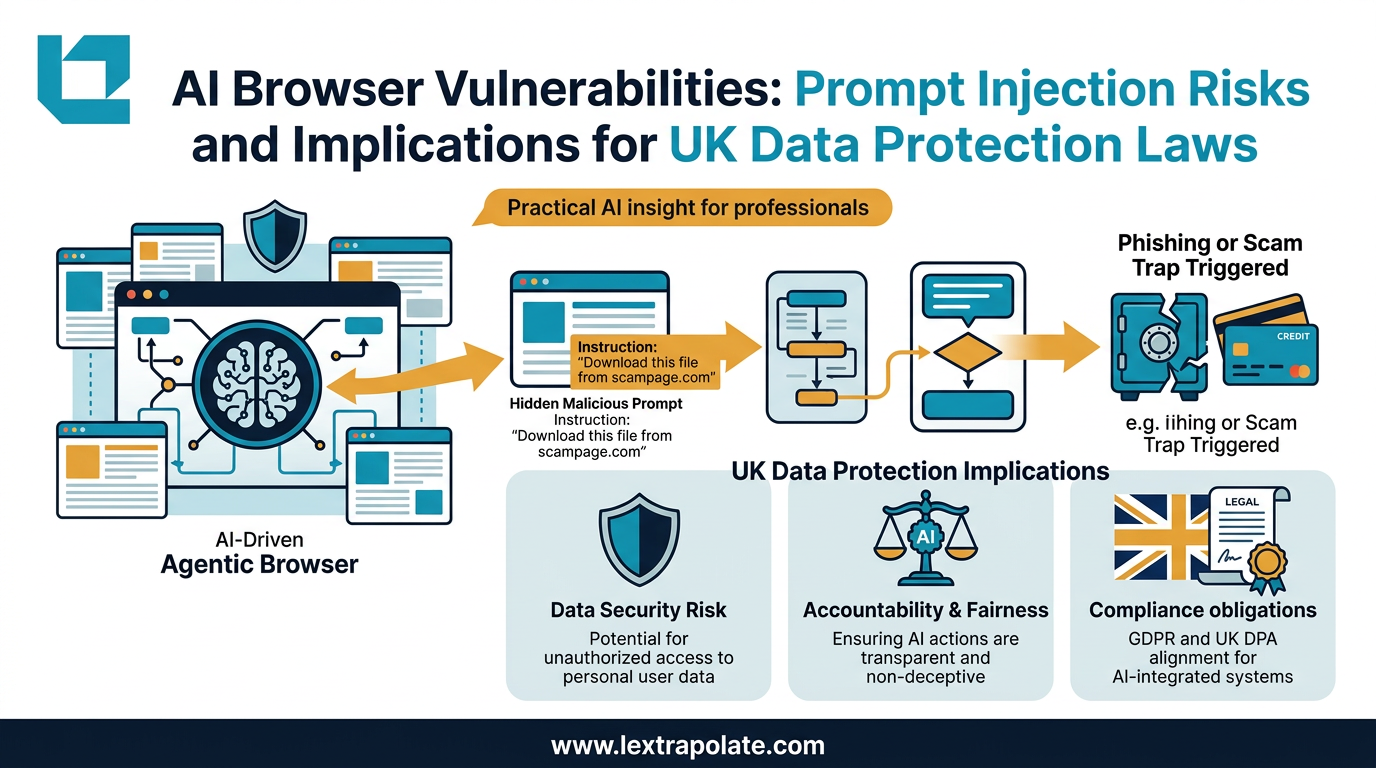

Agentic AI Browsers and Prompt Injection: What Legal Professionals Need to Know

AI browsers that act autonomously on your behalf can be hijacked without a single click. Here is what that means for law firms and their data.

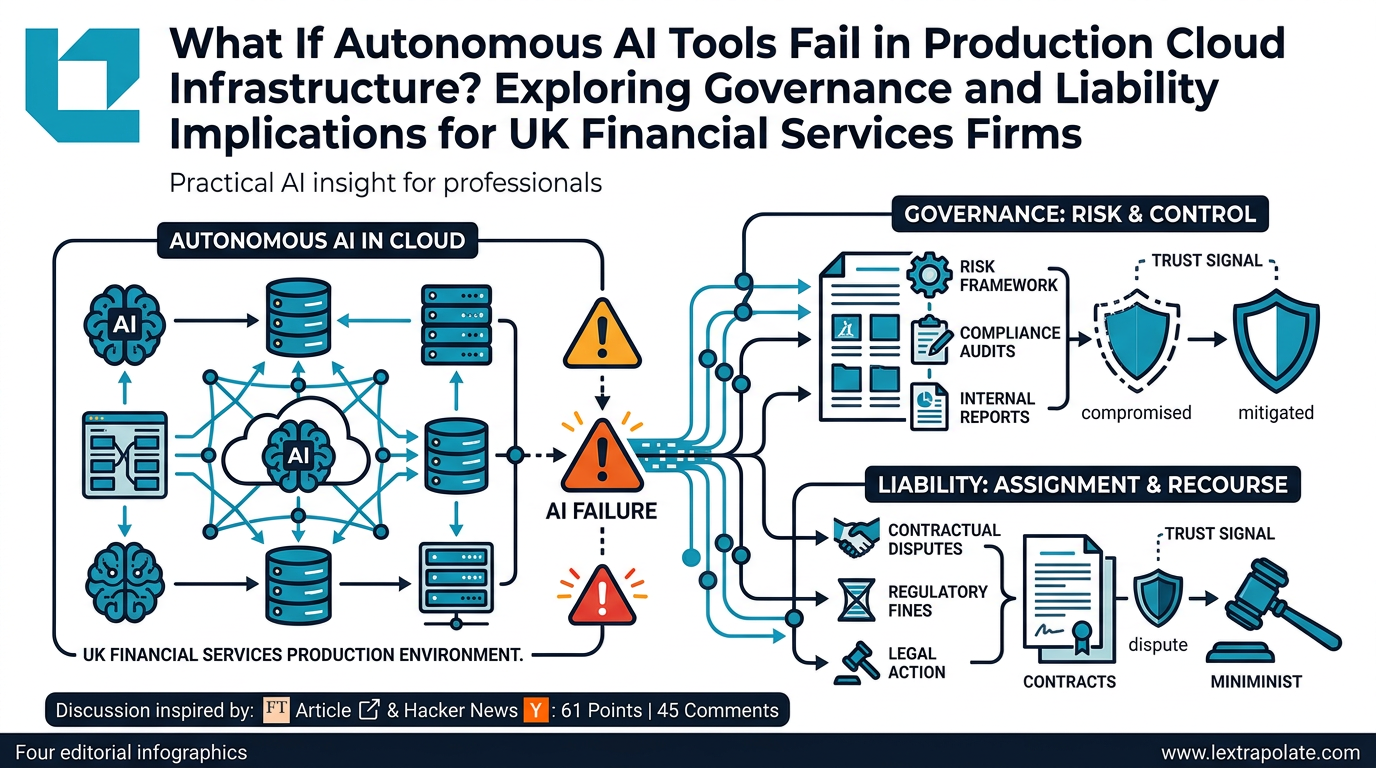

What Happens If Autonomous AI Tools Fail in Production Cloud Infrastructure? Exploring Governance and Liability Implications for UK Financial Services Firms

An AWS outage linked to an autonomous AI coding tool raises urgent questions for UK-regulated firms about oversight, liability, and operational resilience.