What If AI Training Became a Professional Obligation for Lawyers?

Suppose the SRA announced tomorrow that using AI tools in legal practice without documented, structured training was a breach of your competence obligations. How many firms would be in trouble by Friday?

More than would care to admit.

That scenario has not happened. But the question is worth sitting with, because the direction of travel is clear, and the gap between where most firms are and where they need to be is widening faster than most managing partners seem to appreciate.

The announcement last week that Hotshot and Legora are partnering to produce workflow-specific training for law firms is a sensible, practical step. Hotshot builds legal education content. Legora is an AI tool already deployed firmwide at Linklaters and Goodwin. Combining use-case-driven video content with instructor-led workshops is exactly the kind of structured approach that moves the needle. It treats AI adoption as an education problem, not a technology problem.

That framing is correct. But it also raises a harder question that the industry keeps deferring.

What the SRA Standards Actually Say

The SRA Competence Statement sets out the skills and knowledge solicitors need to practise effectively, and the SRA Code of Conduct for Solicitors, RELs and RFLs is unambiguous. Paragraph 3.2 requires the service you provide to clients to be competent. Paragraph 3.3 requires you to maintain your competence and keep your professional knowledge and skills up to date. Those obligations are not limited to black-letter law. They apply to how you work.

If you are using an AI tool to draft contracts, review documents, or advise clients, and you do not understand how that tool generates outputs, what its failure modes are, or when it is likely to be wrong, you are not applying your skills appropriately. You are outsourcing your judgement to a system you cannot adequately supervise.

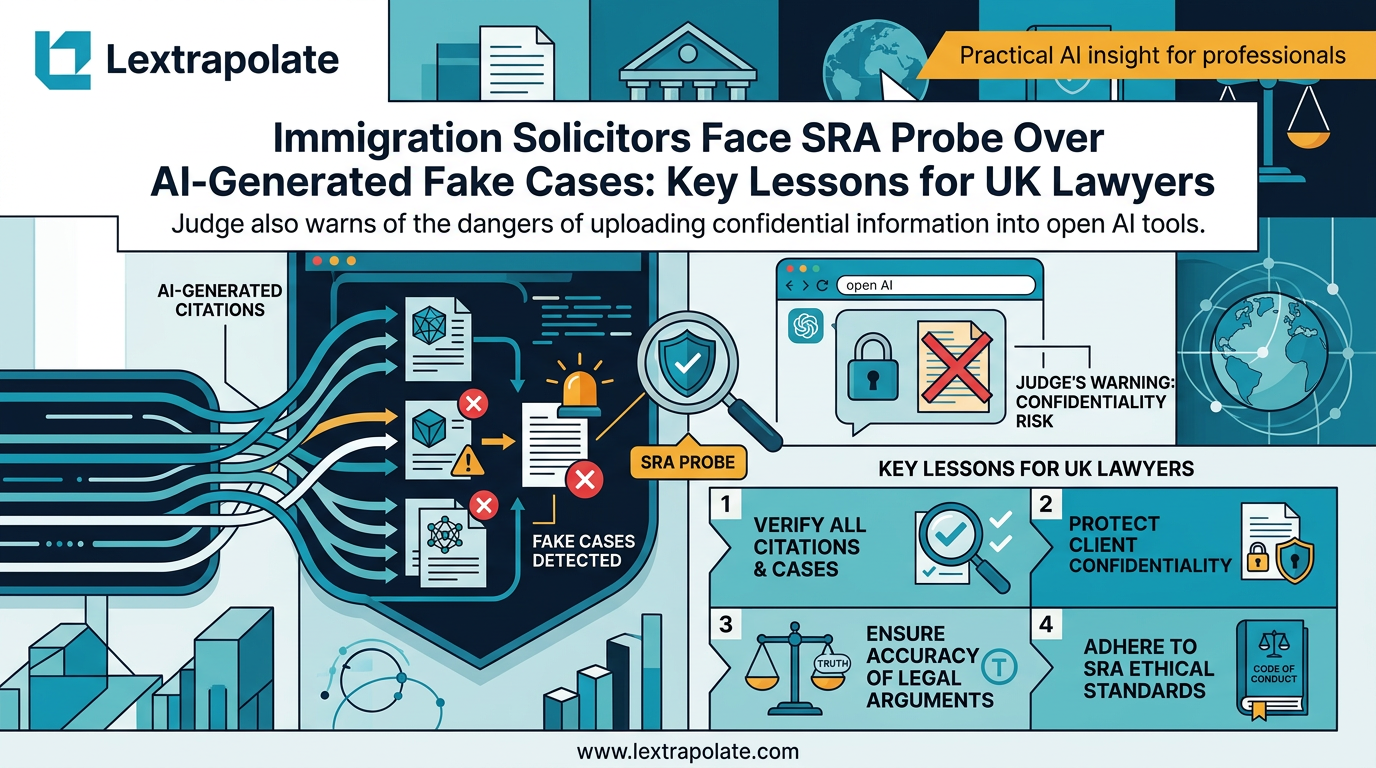

The SRA has not yet drawn that line explicitly in a published enforcement decision. But the logic is already there in the framework. The Ayinde case, in which a barrister relied on AI-generated case references that did not exist, demonstrated what happens when a professional treats AI output as reliable without verification. The court found the conduct seriously inadequate. The BSB's position tracks the same principle: competence requires understanding your tools.

At some point, and probably soon, a regulator will make that connection explicit. The firms that have invested in structured training will be ahead. The ones that handed out licences and hoped for the best will not.

Why Platform Training Is Not Enough

There is a version of AI training that firms do because it appears on the onboarding checklist. Watch a thirty-minute product video. Sign a form confirming you have read the acceptable use policy. Move on.

That version is nearly worthless.

The Hotshot-Legora model is different in at least one important respect: it focuses on specific legal workflows rather than generic AI concepts. A corporate associate learning how Legora handles due diligence document review in the context of an M&A deal is receiving information they can apply on Monday. That is materially different from a lecture on large language models that leaves the practitioner no clearer on what to actually do.

But even workflow-specific training has limits if it is not embedded in a culture that takes AI competence seriously. The tool changes. The use cases evolve. What Legora can do in February 2026 is not what it could do twelve months ago, and it will not be what it can do twelve months from now. Training therefore cannot be a one-time event. It needs to be a continuing obligation, tracked, refreshed, and supervised in the same way we treat CPD.

Competitors to Legora, including Harvey and August, are building similar training infrastructure. The market is converging on the view that training is part of the product. That is progress. It still does not resolve the question of who is responsible when the trained professional makes an error using a trained tool.

The Supervision Gap

Suppose a newly qualified solicitor at a firm with a firmwide Legora rollout completes the full Hotshot training programme. They understand the tool. They use it correctly for a document review task. The output contains an error. The error reaches the client. Who is responsible?

Under current professional rules, the answer includes the supervising solicitor. Paragraph 3.5 of the SRA Code of Conduct for Solicitors, RELs and RFLs provides that where you supervise or manage others, you remain accountable for the work carried out through them and you must effectively supervise work being done for clients. That obligation does not diminish because AI was involved in the work product. If anything, it becomes more demanding, because the supervising solicitor must now assess not just the quality of the associate's analysis but also whether the AI-generated components have been adequately verified.

This is not a hypothetical risk. It is the current state of practice at every firm that has deployed these tools at scale. The question is whether supervision frameworks have been updated to reflect it.

Most have not. The rollout gets announced. The press release goes out. The training programme launches. The supervision guidance remains unchanged from 2019.

Structured training is necessary. It is not sufficient unless it is accompanied by equally structured supervision protocols that tell senior lawyers what they are actually responsible for checking.

What This Means on Monday Morning

If your firm has deployed an AI tool and you are a fee earner using it, three questions are worth asking this week.

First: have you received training that covers not just how to use the tool but when not to use it, and what outputs require verification before reliance? If not, request it. Document the request.

Second: does your supervision framework explicitly address AI-generated work product? If you are a partner supervising associates, your file review process should already be asking this. If it is not, raise it with your risk team.

Third: could you explain, in a regulatory investigation, what steps you took to ensure the AI output you relied upon was accurate? If the answer is "I assumed it was right", that is not a defensible position under the competence framework.

The Hotshot-Legora partnership is a welcome step in the right direction. But the underlying issue is not which training programme a firm chooses. It is whether the profession has accepted that competence now includes understanding your AI tools well enough to supervise, verify, and take professional responsibility for their outputs.

That acceptance is still patchy. The regulatory consequences of that patchiness will not be.

Firms that treat AI training as a compliance checkbox are building a liability. Firms that treat it as ongoing professional development, embedded in how work is supervised and reviewed, are building something more durable.

The SRA has not yet forced the issue. Assume it will.

Chris Jeyes

Barrister & Leading Junior

Founder of Lextrapolate. 20+ years at the Bar. Legal 500 Leading Junior. Helping lawyers and legal businesses use AI effectively, safely and compliantly.
