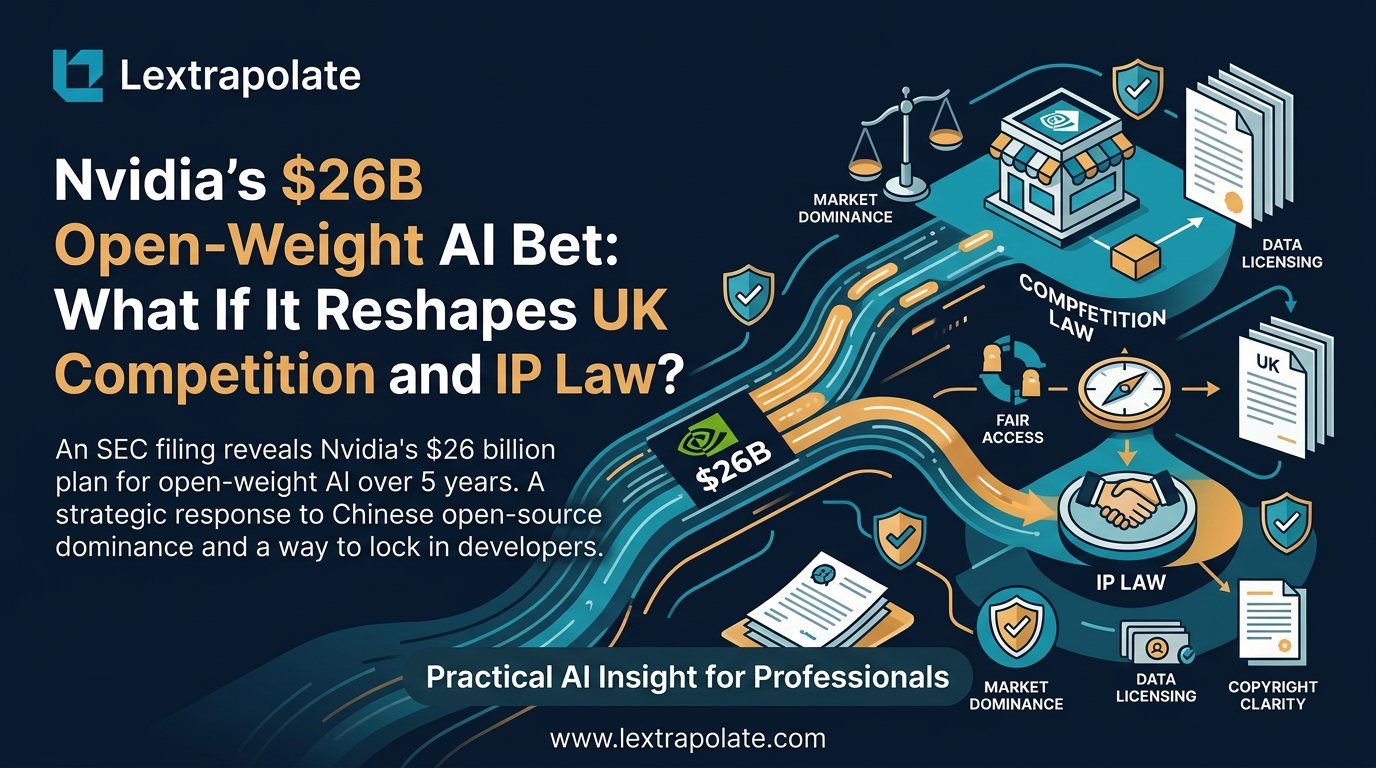

What if Nvidia Became the World's Biggest Open-Weight AI Supplier? What It Means for Law Firms Considering On-Premise AI

Nvidia is reportedly shifting $26 billion into open-weight AI model development, a move that could allow a UK law firm to run a GPT-4-class model entirely on its own internal servers. If the investment materialises, the primary barrier to private, on-premise AI shifts from model capability to hardware procurement.

Reports circulating in the technology press, citing SEC disclosure language, suggest Nvidia is planning to invest $26 billion over five years in open-weight AI model development. The detail is worth pausing on. Not $26 billion in chips. Not in data centres. In the models themselves. That is a significant strategic signal, even if the full picture is still emerging ahead of Nvidia's GTC 2026 conference.

This article is not a news report. The investment has been reported but not yet independently verified against a primary source. What I want to do is treat it as a thought experiment: if a hardware giant of Nvidia's scale commits to open-weight AI at that magnitude, what does that mean for professional services firms thinking about private AI deployment? And specifically, what should UK law firms be thinking about?

Open-Weight Models and Why the Distinction Matters

Most people talk loosely about "open source AI". The more precise term is open-weight: models where the trained weights are publicly released, allowing anyone to download, run, modify, and fine-tune them without an API call, without a subscription, and without sending data anywhere.

The contrast with proprietary models is stark. When a firm uses GPT-4 via the OpenAI API, the query leaves the firm's infrastructure. OpenAI processes it. The firm relies on OpenAI's terms, OpenAI's uptime, OpenAI's pricing. For many matters, particularly anything touching client confidentiality, privilege, or sensitive commercial information, that arrangement carries real risk. The Solicitors Regulation Authority has been clear that solicitors remain responsible for client data regardless of which third-party tools they use.

Open-weight models flip this. The weights sit on hardware the firm controls. The model runs locally. Nothing leaves the building unless the firm chooses. For law firms, that architectural difference is not a minor technical detail. It is the difference between a usable tool and an unusable one for whole categories of work.

The barrier, historically, has been that open-weight models were simply not good enough. Meta's early Llama releases, Mistral's first models: capable, but not at the level professional services work demands. If Nvidia is genuinely channelling $26 billion into developing and funding open-weight models, and optimising them to run efficiently on Nvidia hardware, that capability gap narrows fast.

The Competition Law Dimension

Assume for a moment that Nvidia executes this strategy at scale. The company ends up controlling both the dominant AI chip hardware (its share of the AI accelerator market is currently estimated at around 80%) and a major portfolio of the leading open-weight models. The models are optimised to run best on Nvidia's own GPUs. That combination deserves scrutiny.

Under the Enterprise Act 2002, the Competition and Markets Authority can review mergers that may substantially lessen competition and investigate markets whose features adversely affect it. Abuse of a dominant position is policed separately under the Competition Act 1998. The CMA has already signalled appetite for examining AI market dynamics, including scrutiny of Microsoft's relationship with OpenAI. A scenario where Nvidia simultaneously controls infrastructure and the primary model layer would attract similar attention.

The more subtle concern is the ecosystem pull. Nvidia's commercial logic is transparent: if the best open-weight models run most efficiently on Nvidia chips, firms building private AI infrastructure have a strong incentive to standardise on Nvidia hardware. Open-weight does not mean neutral. The weights are free; the optimal hardware is not. That is a legitimate business model. It is also the kind of vertical alignment that regulators find interesting.

Data Protection and the Training Data Question

Open-weight models carry a second layer of complexity that matters for UK firms: the training data.

When a firm fine-tunes an open-weight model on its own documents, data protection questions arise under the UK GDPR. If the training corpus includes client documents containing personal data, the firm is processing that data for a new purpose. Is there a lawful basis? Has it been adequately de-identified? The ICO's guidance on AI and data protection makes clear that fine-tuning a model on personal data is processing, full stop, and must be justified accordingly.

There is also an IP dimension. Under the Copyright, Designs and Patents Act 1988, copying third-party text in order to train a model is potentially a restricted act. The government's proposed text and data mining exception has been contested, and the current legal position in the UK remains unsettled. A firm fine-tuning a model on case law, publicly available judgments, or licensed legal databases needs to understand whether the licence for those materials extends to training use. Most such licences do not.

None of this makes on-premise AI unworkable. It does mean the governance framework needs to be thought through before the technical deployment, not after.

What This Means

If you run a law firm, a chambers, or a legal operations function, here is what I would take from this scenario.

First, watch the open-weight model quality curve. It is moving fast. If Nvidia or any other well-capitalised actor accelerates model capability in the open-weight tier, the case for on-premise deployment strengthens materially. The technical barrier was always the bigger obstacle for most firms, not the governance one.

Second, do not wait for the models to arrive before building the governance framework. The data audit, the lawful basis analysis, the training data IP review: these take time and should not be done under commercial pressure. Firms that do this work now are positioned to move quickly when the right model becomes available.

Third, think about the hardware question separately from the software question. If on-premise AI is part of your three-year plan, the server infrastructure decisions you make now will constrain or enable what you can run. A conversation with your IT function about GPU capacity is not premature.

Fourth, the confidentiality argument for on-premise AI is compelling but needs to be made carefully. Not every cloud-based AI arrangement is incompatible with professional obligations. Some are. Knowing which is which requires legal analysis, not a general aversion to cloud tools.
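On the hardware point, a rough sizing sketch may help frame the conversation with your IT function. The only firm rule used here is arithmetic: the memory needed to hold a model's weights is roughly the number of parameters multiplied by the bytes stored per parameter. Everything else is an illustrative assumption of mine rather than a figure from the reports: the 70-billion-parameter model size, the 80 GB GPU, and the 20% headroom factor for working memory are all hypothetical placeholders to be replaced with real numbers from your supplier.

```python
import math

# Bytes stored per parameter at common numeric precisions.
# Lower precision (quantisation) shrinks the model at some cost in quality.
BYTES_PER_PARAM = {"fp16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gb(params_billions: float, precision: str) -> float:
    """Memory for the weights alone, in GB (10^9 bytes)."""
    return params_billions * BYTES_PER_PARAM[precision]


def gpus_needed(
    params_billions: float,
    precision: str,
    gpu_memory_gb: float,
    overhead_factor: float = 1.2,  # assumed 20% headroom, not a measured value
) -> int:
    """Smallest whole number of GPUs whose combined memory fits the model."""
    total_gb = weight_memory_gb(params_billions, precision) * overhead_factor
    return math.ceil(total_gb / gpu_memory_gb)


if __name__ == "__main__":
    # Hypothetical example: a 70-billion-parameter model on 80 GB GPUs.
    for precision in ("fp16", "int8", "int4"):
        print(
            precision,
            weight_memory_gb(70, precision),
            "GB of weights,",
            gpus_needed(70, precision, gpu_memory_gb=80),
            "GPU(s)",
        )
```

The sketch deliberately ignores throughput, concurrency, and context length, all of which push real requirements upward. Its purpose is only to show why the precision a model is run at matters as much to the procurement decision as the model's headline size.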

The Bigger Picture

The AI market has been shaped, so far, primarily by a small number of proprietary model providers. If that changes, whether through Nvidia's reported investment, the continued maturation of existing open-weight models like Llama 3 and Mistral, or competitive pressure from Chinese open-weight development, the market structure for professional AI deployment changes with it.

Law firms have good reasons to prefer private, controllable AI deployments. The technical capability to support that preference is arriving. The governance and legal frameworks that need to surround it are not especially complex, but they require deliberate attention.

The firms that will benefit most are not the ones that move first. They are the ones that move thoughtfully, with clear policies, proper training, and legal advice that reflects the actual state of the law rather than vendor marketing.

If you are thinking about on-premise AI deployment and want to work through the governance questions, I am happy to talk.

Sources

1. Nvidia to Invest $26 Billion in Open-Source AI Models | Intellectia.AI

2. Nvidia Targets Open AI Leadership With $26 Billion Model Development Plan

3. Nvidia will invest $26 billion over five years to develop open weighted AI models

4. Nvidia Bets $26B on Open-Weight AI Models to Challenge OpenAI

5. NVIDIA to Fund Open-Weight AI Models With $26B Push

Chris Jeyes

Barrister & Leading Junior

Founder of Lextrapolate. 20+ years at the Bar. Legal 500 Leading Junior. Helping lawyers and legal businesses use AI effectively, safely and compliantly.

Get in TouchMore from Lextrapolate

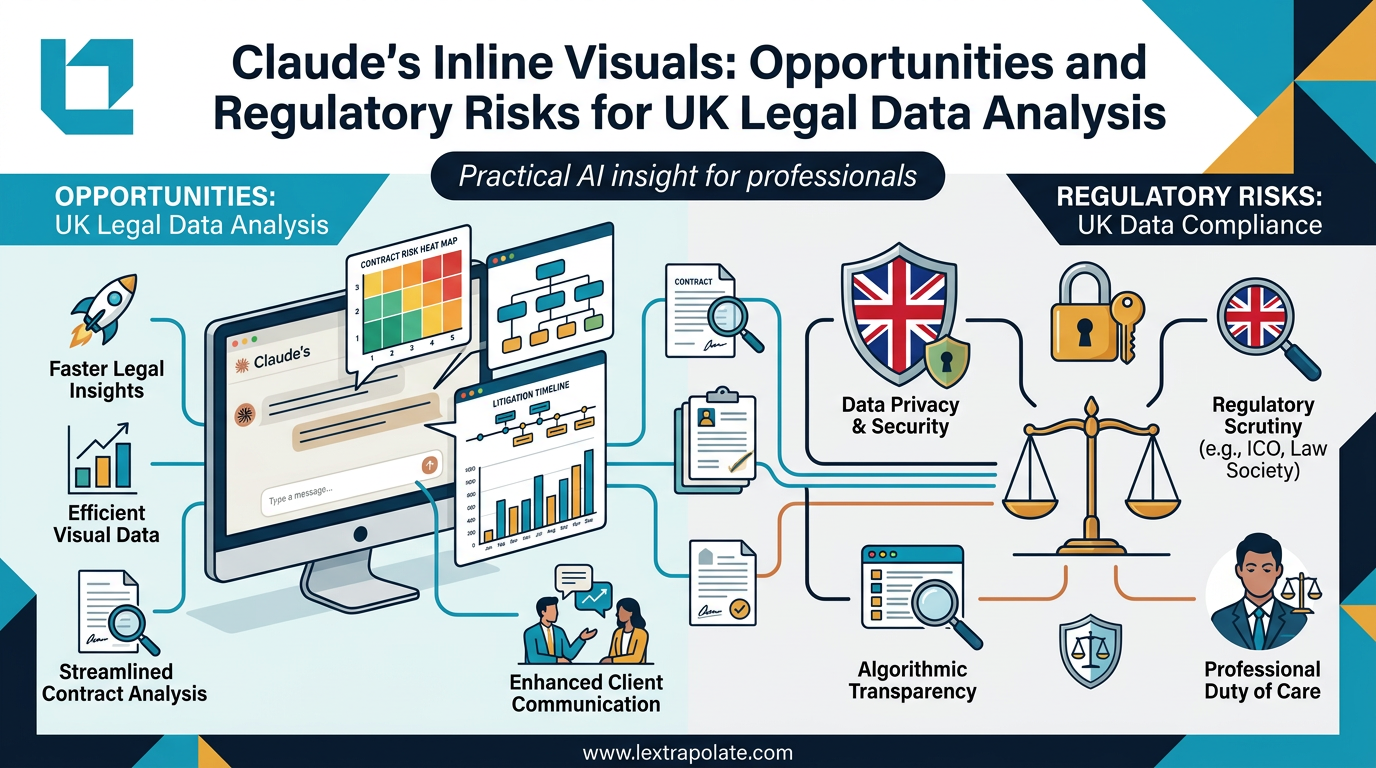

AI-Generated Visuals in Legal Work: Useful Shortcut or Regulatory Trap?

AI can now turn data into interactive charts mid-conversation. For lawyers, that's useful. It also raises questions about transparency, data protection, and professional responsibility.

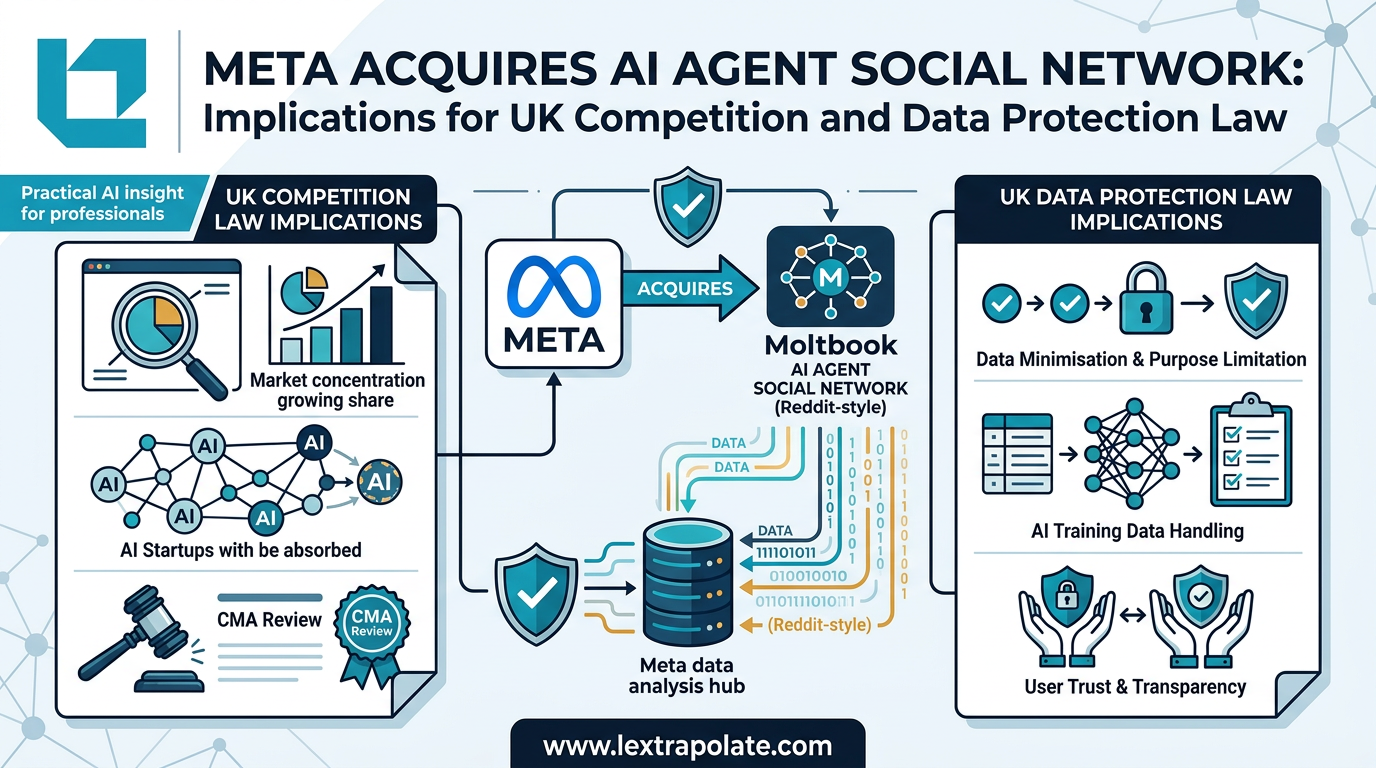

AI Agents Talking to Each Other: What It Means When Social Networks Go Autonomous

Meta has acquired a platform built for AI-to-AI communication. The regulatory and practical implications for UK professionals are worth examining carefully.

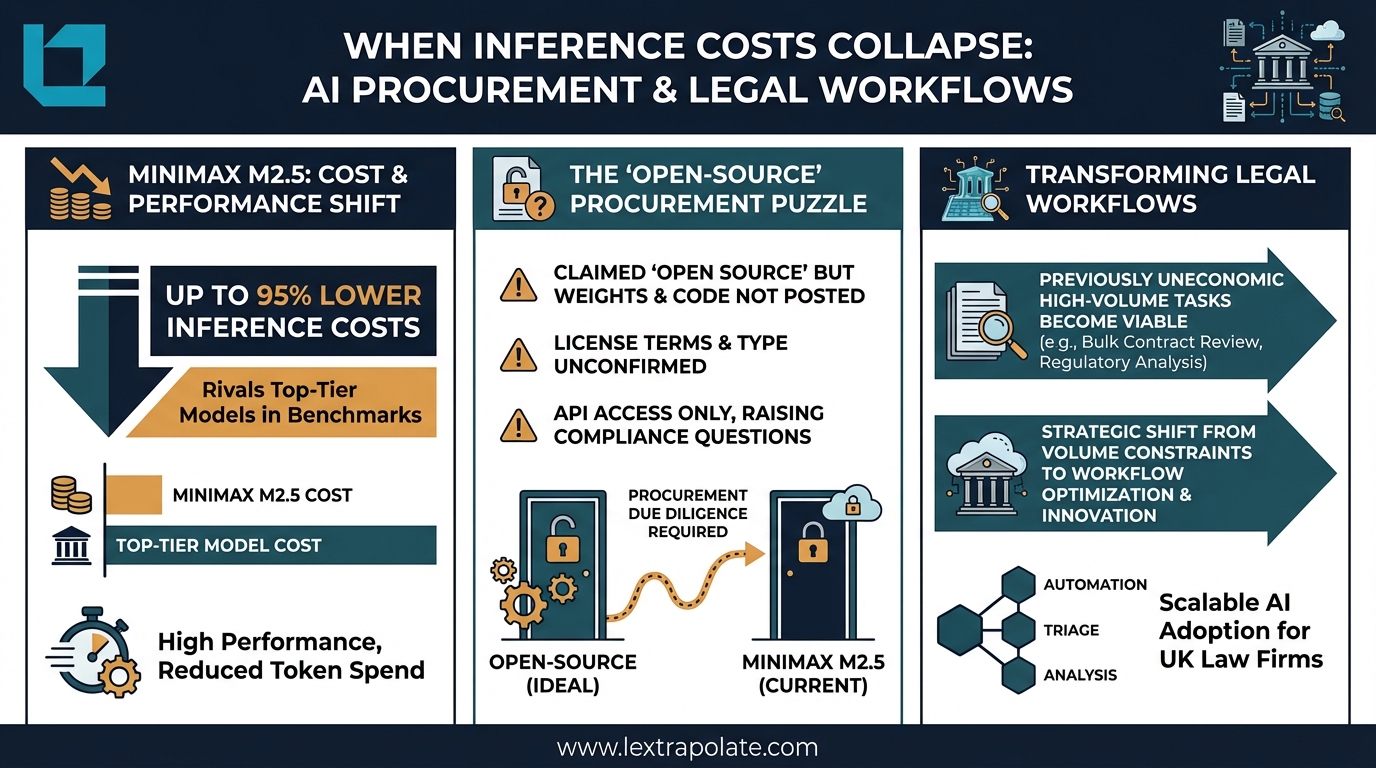

When Inference Costs Collapse: What Cheap AI Processing Means for Legal Workflows

AI inference costs are falling fast. For UK law firms running high-volume document work, that shift has real commercial and compliance implications worth thinking through now.