What If Your Firm Never Escapes Pilot Purgatory? The AI Adoption Trap Most Law Firms Are Already In

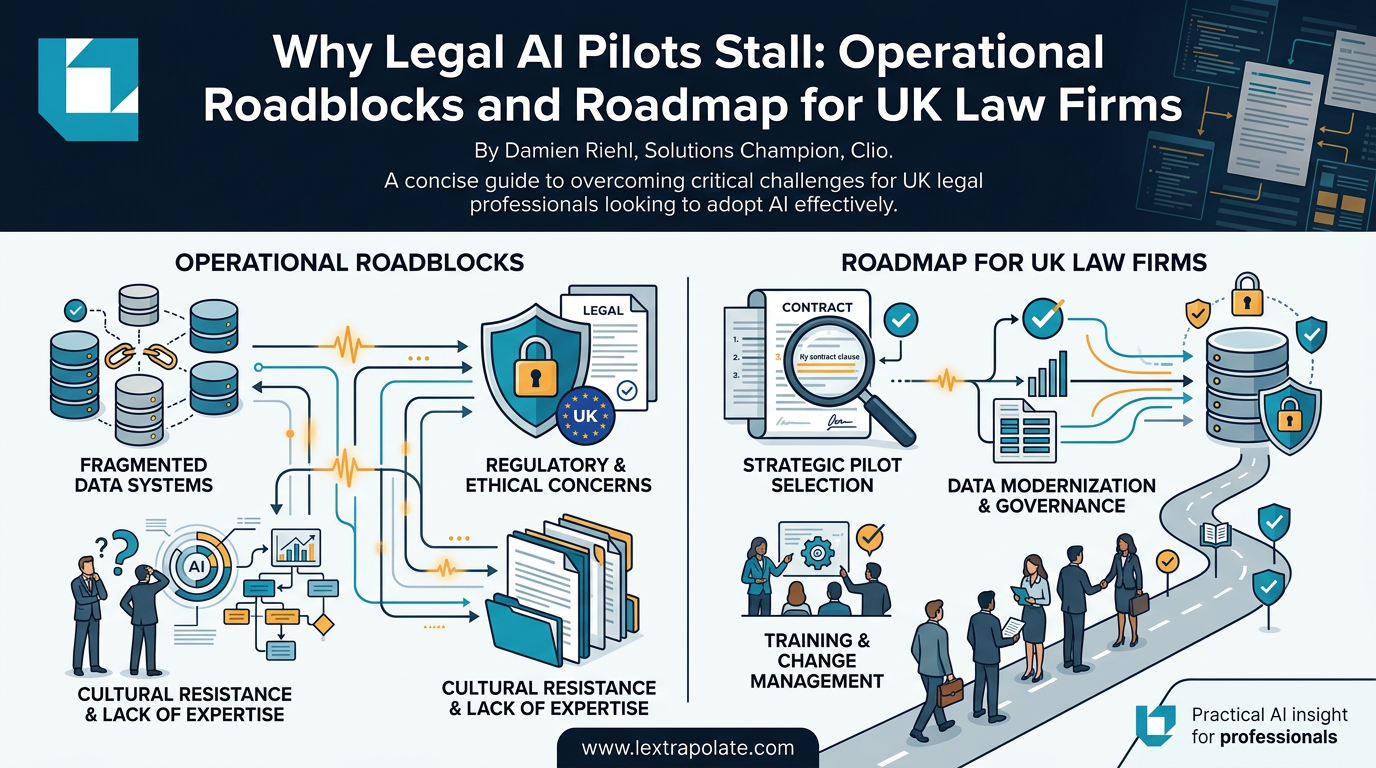

Legal AI adoption fails when firms treat the software as a bolt-on rather than as a catalyst for workflow redesign. By one widely cited estimate, 95% of legal AI pilots fail to scale, yet the root cause is rarely the technology: it is the failure to adapt organisational processes to the capabilities of generative tools.

According to Axiom Law, approximately 95% of legal AI pilots fail to scale beyond the initial trial phase. Thomson Reuters puts the figure differently but reaches the same conclusion: around 80% of legal AI investments fail to deliver the returns that justified the original business case. A survey reported by the Global Legal Post found that a majority of in-house legal teams remain stuck in the pilot phase, with no clear path to broader rollout.

The pattern is consistent enough that it deserves a name. Pilot purgatory. You authorise the spend, run the test, collect some anecdotal feedback, and then the momentum dies. The tool works. The organisation does not change around it.

The Failure Is Rarely Technical

This is the part that surprises senior partners who have invested in good technology. The model performs. The outputs are often genuinely useful. But performance in a controlled pilot bears almost no relationship to adoption at scale.

The reasons are structural. Pilots are typically run by enthusiasts: a small group of early adopters who self-select, who tolerate friction, who enjoy tinkering. When rollout extends to the rest of the firm, that tolerance evaporates. People revert to known processes, not because the AI is poor, but because nobody redesigned the workflow to accommodate it. The AI sits beside the existing process rather than inside it. Nor is this only an AI problem. Plenty of law firms have introduced new ways of working or new efficiency-driving processes, only to see their staff drift back to old habits within days or weeks of the launch.

Launch Consulting's analysis of AI adoption barriers identifies lack of strategic alignment as the primary killer. The tool gets procured at one level of the organisation, while the people who will use it daily have had no input into the selection, no say in how it fits their work, and no training beyond a one-hour vendor webinar. That is not adoption. That is installation.

Artificial Lawyer's coverage of Damien Riehl's analysis from Clio reinforces this point. The question firms have been asking for three years, namely whether AI can help with legal work, has already been answered. The question that now matters is whether the firm is willing to do the organisational work required to embed that answer into daily practice. Most are not.

The Governance Gap and Its Regulatory Teeth

There is a further problem that UK firms in particular should confront directly, because it carries regulatory risk.

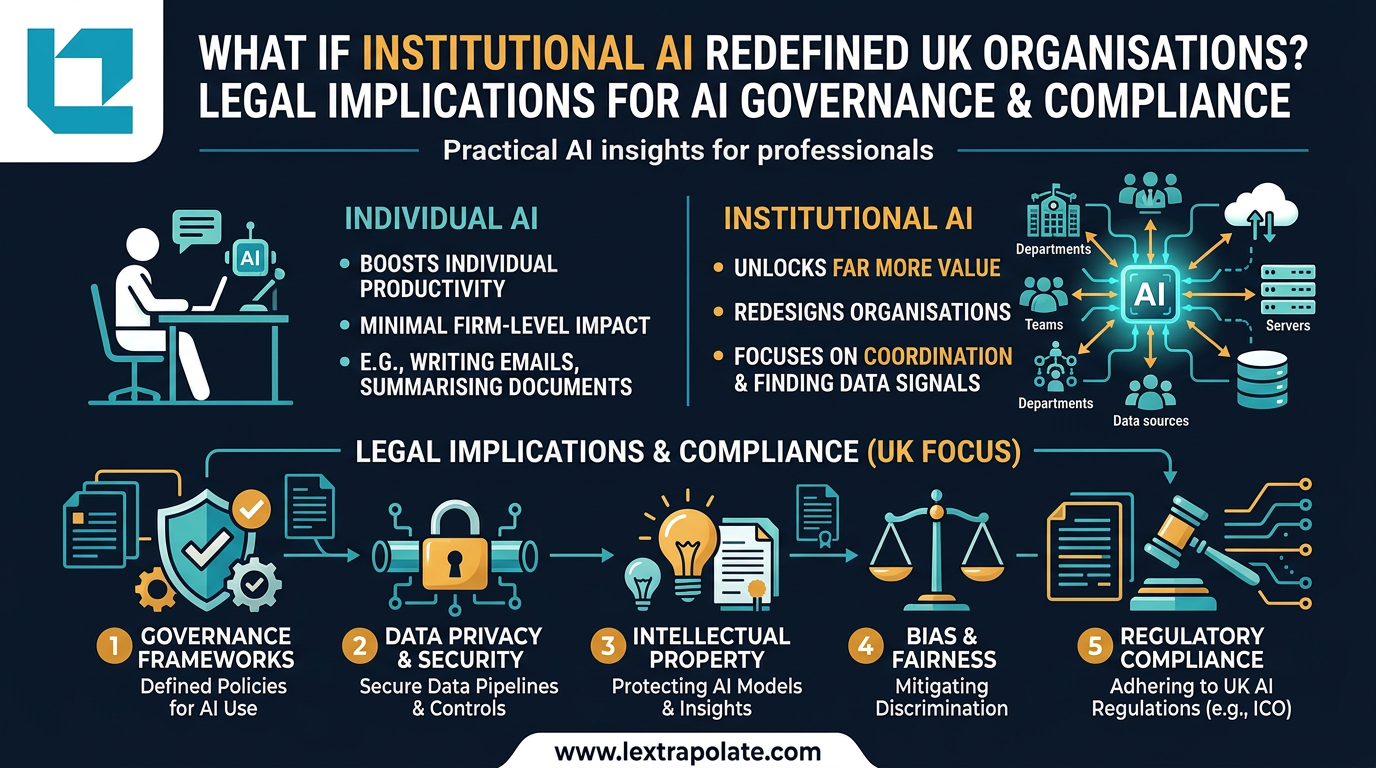

When a pilot runs without formal governance, the firm has typically made an implicit decision that the AI tool sits outside its compliance framework. That position is untenable under the SRA Standards and Regulations. Paragraph 6.3 of the SRA Code of Conduct for Solicitors, RELs and RFLs imposes a duty of confidentiality. Where client data goes when it passes through a third-party AI system (whether it is retained, whether it is used for model training, whether it is processed outside the UK) is not a vendor assurance question. It is a professional obligation.

Paragraph 3.3 of the same Code requires solicitors to maintain their competence and keep their professional knowledge and skills up to date, and paragraph 3.2 requires that the service they provide to clients is competent. If a fee earner is using an AI tool to assist with advice and does not understand how that tool operates, what its limitations are, or when its outputs should be treated with scepticism, that is a competence question. The SRA has not yet issued definitive AI guidance, but the existing Standards are not silent on the point.

UK GDPR adds a further layer. Data processed by an AI system is personal data if it relates to an identifiable individual. Most legal work involves exactly that. A pilot that sends client information to an external AI platform without a proper data processing agreement, without a data protection impact assessment, and without any record of the decision is not just strategically careless. It is potentially a reportable breach.

The Global Legal Post survey found that 44% of in-house legal professionals cite security concerns as their primary barrier to AI adoption. That is not irrational caution. It reflects a genuine governance vacuum that firms have not yet filled.

What Actually Works

The firms making genuine progress share a set of characteristics that have nothing to do with which AI tool they chose.

They start with workflow analysis, not technology selection. Before procuring anything, they map the processes they want to improve. They identify the friction points, the repetitive tasks, and the stages where human effort is being spent on work that does not require human judgement. That analysis tells them what capability they need. It also means that when the tool arrives, there is already a place for it to fit. In short, they take the trouble to understand what their teams actually do.

They invest in training that goes beyond product tutorials. There is a material difference between knowing how to use a tool and understanding when to trust it, when to check it, and when to override it. The Ayinde case illustrated what happens when a lawyer submits AI-generated content without verification. The problem was not the tool. The problem was an absence of critical judgement about how to use it. Training must build that judgement, not just demonstrate features.

They assign ownership. Somebody is responsible for the AI programme. Not the vendor. Not the IT department in isolation. A named person with authority, accountability, and the time to do the job properly. Without that, the programme drifts.

They measure the right things. Not whether people are using the tool, but whether the work is better, faster, or lower-risk as a result. Time saved on first drafts matters. Reduction in revision cycles matters. If the metric is login frequency, the programme will be managed to that metric and nothing else will improve.

The Monday Morning Test

If you read nothing else in this article, read this paragraph.

On Monday morning, pick one workflow. One. Not the most complex. Not the most impressive for a case study. The most repetitive, the most predictable, the most amenable to AI assistance. Map it in detail. Identify where AI fits, where it does not, and what a fee earner needs to know to use it safely. Then train specifically for that workflow, not for the tool in the abstract. After four weeks, review the outputs against a concrete measure, such as time to first draft or the number of revision cycles.

That is it. Not a programme. Not a strategy document. One workflow, properly done.

The firms that scale AI successfully are not the ones that launched the most ambitious pilots. They are the ones that did one thing well, learned from it, and repeated the process. The technology is not the constraint. It has not been the constraint for some time.

The constraint is the willingness to do unglamorous organisational work: process mapping, governance documentation, targeted training, and honest measurement. None of that appears in a vendor demo. All of it determines whether the investment delivers anything at all.

If your firm is still in pilot purgatory twelve months from now, the reason will not be the AI.

Sources

- Why Legal AI Adoption Slows After Pilots - Artificial Lawyer
- Why 80% of legal AI government investments fail to deliver - Thomson Reuters
- Beyond the Hype: Why 95% of Legal AI Pilots Fail - Axiom Law
- AI Adoption Challenges: 8 Roadblocks and How to Overcome Them - Launch Consulting
- Majority of in-house legal teams still stuck in pilot phase of AI use - Global Legal Post

Chris Jeyes

Barrister & Leading Junior

Founder of Lextrapolate. 20+ years at the Bar. Legal 500 Leading Junior. Helping lawyers and legal businesses use AI effectively, safely and compliantly.
