What If Big Tech's Deepfake Detection Lags? Implications for UK Platform Liability Under the Online Safety Act

Deepfake detection is currently losing the arms race against AI generation, creating a structural integrity risk for UK digital evidence. While the Online Safety Act 2023 imposes new duties on platforms, it offers no shield for lawyers presenting synthetic media in court.

That scenario is no longer hypothetical. PwC's 2026 fraud analysis flags synthetic identity fraud and deepfake-enabled authorisation as among the most significant emerging threats facing financial and professional services. The technology to create convincing audio-visual forgeries is now widely accessible. The technology to reliably detect them is not keeping pace.

That gap matters for every lawyer who touches digital evidence, advises platforms, or represents clients whose reputations or assets depend on what a court or regulator accepts as authentic.

The Detection Problem Is Structural, Not Temporary

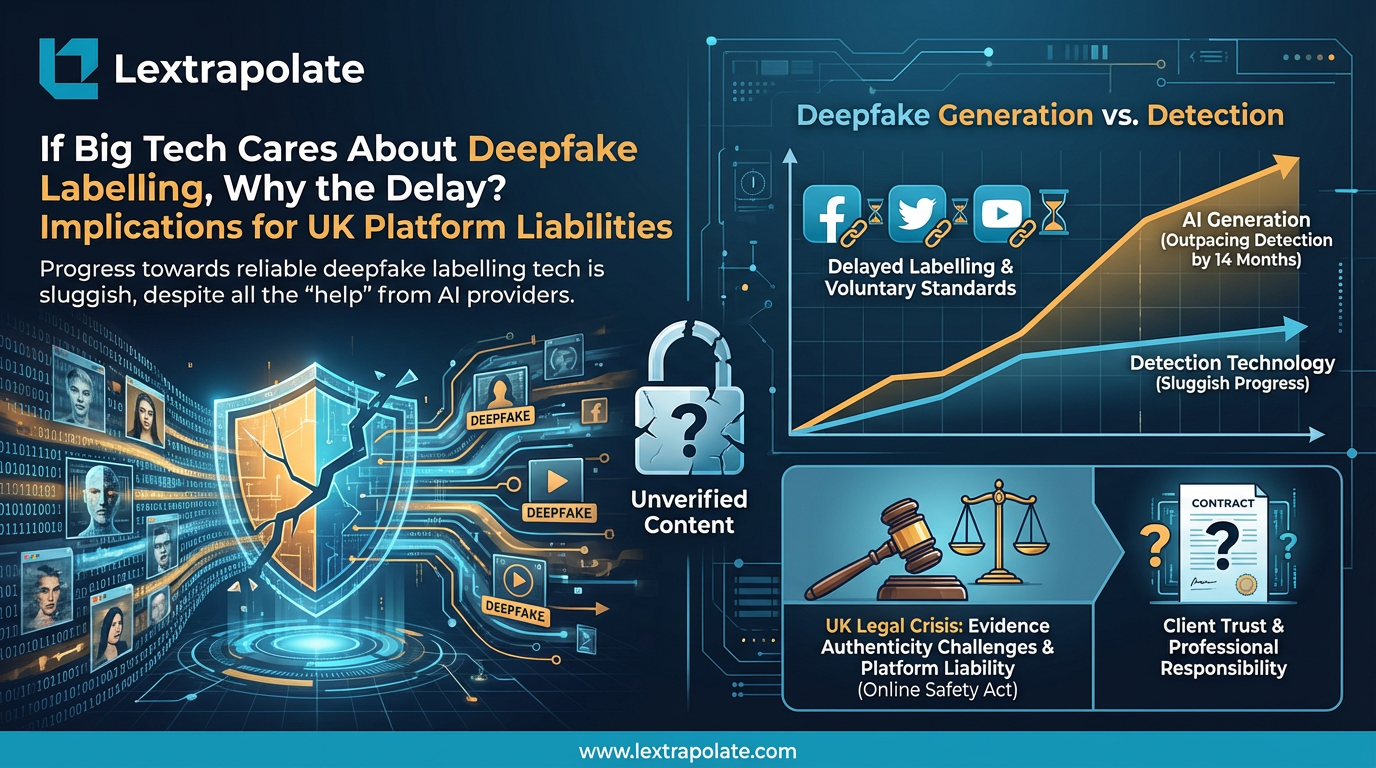

The standard framing treats deepfake detection as a technical problem that better tools will eventually solve. That framing is optimistic to the point of being misleading.

Research published in early 2026 by the University of Florida found that automated detection systems outperform humans in identifying deepfake images, but humans outperform AI when it comes to deepfake video. Neither category produces reliable results. The generators and the detectors are locked in an iterative cycle where each improvement in detection prompts a corresponding improvement in generation. There is no finishing line.

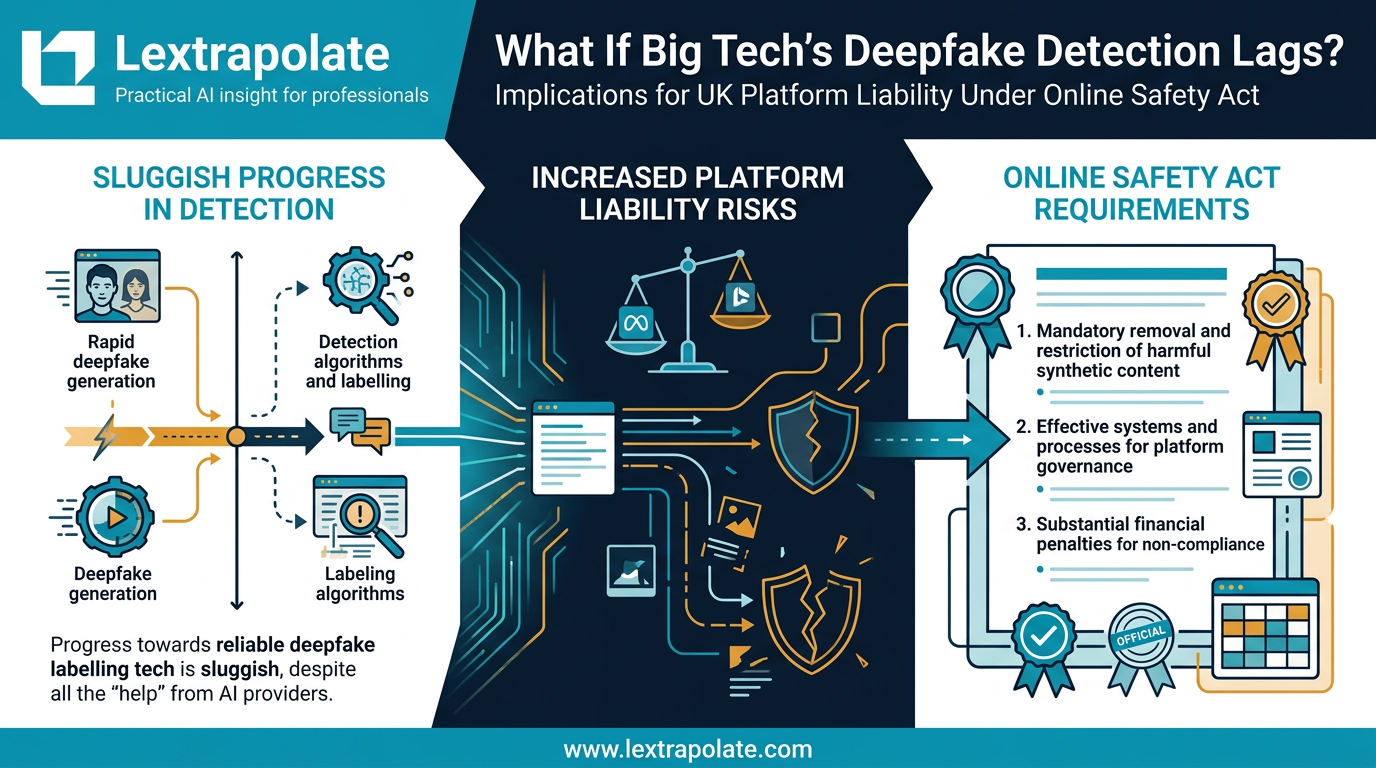

Current detection approaches rely primarily on spatial artefacts in images, transformer-based pattern recognition, and provenance or watermarking systems that require the original content to have been tagged at source. Each has a critical weakness. Spatial artefacts become harder to detect as generation quality improves. Transformer-based systems are only as good as their training data. Provenance systems are useless when content has been stripped of metadata, re-encoded, or simply generated by tools that never embedded provenance data in the first place.
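The fragility of provenance checks under re-encoding can be shown with a minimal Python sketch. It is a deliberately simplified stand-in, not an implementation of any real provenance standard: it assumes provenance is recorded as a SHA-256 digest of the file's raw bytes, and the payload, the `fingerprint` and `matches_manifest` helpers, and the one-byte "re-encode" are all hypothetical illustrations.

```python
import hashlib


def fingerprint(data: bytes) -> str:
    """Return the SHA-256 hex digest of the raw file bytes."""
    return hashlib.sha256(data).hexdigest()


def matches_manifest(data: bytes, recorded_digest: str) -> bool:
    """Check whether the bytes still match the digest recorded at source."""
    return fingerprint(data) == recorded_digest


# Hypothetical stand-in for a media file tagged at the point of capture.
original = b"\x00\x01HYPOTHETICAL-VIDEO-PAYLOAD\x02\x03"
manifest_digest = fingerprint(original)

# Simulated re-encode: the content looks the same to a viewer,
# but a single byte of the file differs.
reencoded = original[:-1] + b"\x04"

original_ok = matches_manifest(original, manifest_digest)
reencoded_ok = matches_manifest(reencoded, manifest_digest)

print(original_ok)   # True
print(reencoded_ok)  # False
```

A failed match here does not show the content is synthetic, only that the link back to its recorded origin has been broken. Content that was never tagged has no digest to compare against at all.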

C2PA (the Coalition for Content Provenance and Authenticity) represents the most credible industry-wide effort at a structural solution. Major platforms have adopted it in various forms. But adoption is uneven, enforcement is absent, and a significant volume of synthetic content was generated before any provenance standard existed. The archive problem alone is intractable.

What the Online Safety Act Actually Requires

The Online Safety Act 2023 does not use the word "deepfake" as a term of art, but its reach extends clearly into AI-generated content that causes harm.

Non-consensual intimate image offences, codified separately under the Criminal Justice Bill amendments progressing through Parliament, sit alongside the Act's broader duties. Platforms categorised as regulated services under the OSA are required to assess and mitigate the risk of harmful content reaching users. For Category 1 platforms, that includes proactive duties around content that could constitute illegal material, including certain deepfake content.

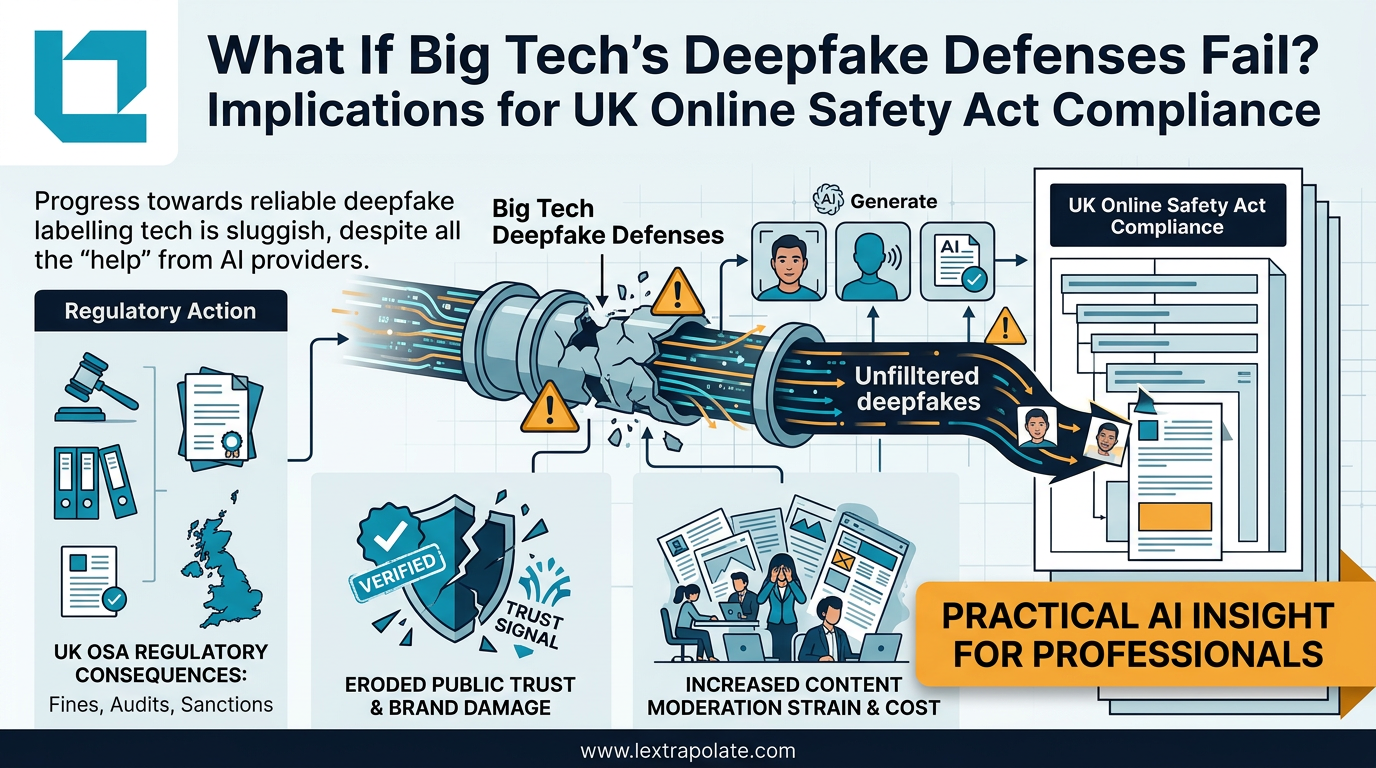

Ofcom's enforcement powers are not trivial. Fines for the most serious breaches reach up to £18 million or 10% of qualifying worldwide revenue, whichever is greater. For a platform the size of Meta or Google, that is a number that concentrates minds. But the enforcement mechanism depends on Ofcom being satisfied that platforms have taken adequate steps. If a platform's deepfake detection capability is inadequate, the question is whether it has nevertheless taken the steps it was required to take under its safety duties.

That is where the legal argument gets interesting. A platform cannot rely on the fact that detection is technically difficult as a complete defence if it has not made demonstrable efforts to implement the best available tools, adopt provenance standards, or build reporting mechanisms that allow users to flag synthetic content. The OSA imposes a reasonableness standard, but reasonable effort in 2026 means something more demanding than it did in 2023.

Lawyers advising platforms should be mapping their clients' current detection capabilities against Ofcom's codes of practice and asking, candidly, whether those capabilities would survive scrutiny. The EU AI Act's approach to synthetic content labelling, which imposes disclosure obligations on deployers of AI systems that generate deepfakes, adds a further layer of compliance pressure for any platform operating across jurisdictions.

What This Means for Evidential Integrity in Litigation

The evidence problem is less discussed but potentially more immediately pressing for practising lawyers.

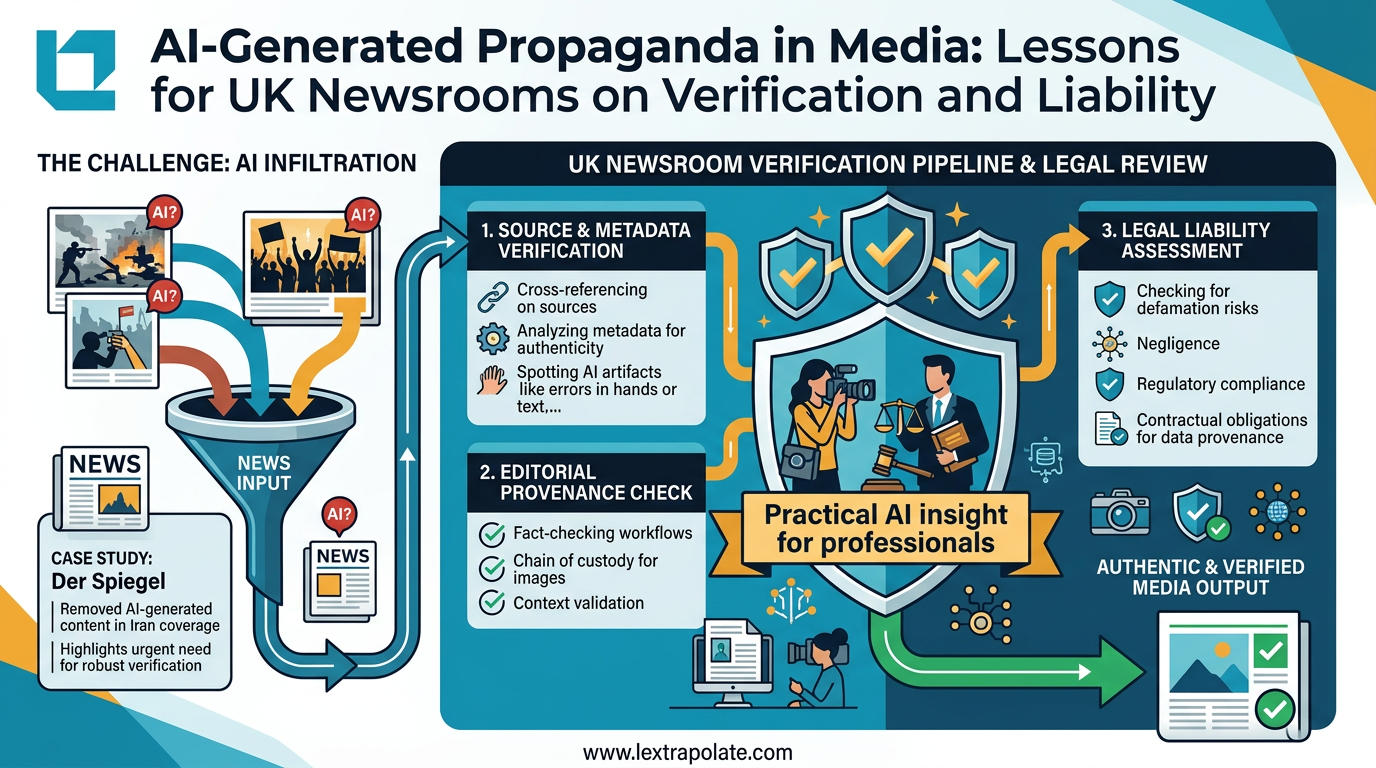

Courts have not yet developed settled rules on the authentication of AI-generated or AI-manipulated content. The existing framework in England and Wales, drawing on the Police and Criminal Evidence Act 1984 for criminal proceedings and the Civil Procedure Rules for civil proceedings, requires that parties relying on electronic evidence establish its authenticity. That has historically meant showing a chain of custody and ruling out interference. It assumed that a document or recording either was or was not genuine.

Deepfakes disrupt that assumption entirely. A video recording that appears genuine, has consistent metadata, and has passed through a legitimate chain of custody could still be synthetic. Conversely, a genuine recording could be challenged as potentially synthetic without any basis beyond the general availability of forgery tools.

Neither the judiciary nor the legal profession has developed adequate technical literacy to handle this at scale. Some specialist firms are building capability. The majority are not. The Ayinde case illustrated what can go wrong when lawyers deploy AI tools they do not understand. The evidence integrity problem is a different version of the same failure mode: professional reliance on outputs that have not been properly interrogated.

The Monday Morning Test

If you have a case with material digital content, ask the following. First, can you establish provenance for that content, not just chain of custody, but verifiable origin? Second, has opposing counsel established the same for the content they rely on? Third, does your expert evidence, if any, address the possibility of synthetic generation, or does it assume authenticity?

If your answer to any of those is uncertain, you have a gap worth addressing before it surfaces in cross-examination or a CPR Part 35 challenge.

For lawyers advising platforms on OSA compliance, the equivalent test is simpler. Pull your client's current deepfake detection policy. Find the section that explains what happens when a reported piece of content cannot be definitively classified by automated tools. If that section is thin, or missing, you have found your advisory mandate.

The commercial incentives that created this problem belong primarily to the same platforms now tasked with solving it. That tension will not resolve itself through voluntary commitments and keynote speeches about authenticity. Ofcom has enforcement tools. The question is whether it will use them with enough specificity to create real compliance pressure, rather than allowing platforms to point at the general difficulty of the problem as a substitute for genuine effort.

Lawyers who wait for regulatory clarity before building technical literacy in this area will find themselves behind the argument when the test cases arrive. They are closer than most people assume.


Chris Jeyes

Barrister & Leading Junior

Founder of Lextrapolate. 20+ years at the Bar. Legal 500 Leading Junior. Helping lawyers and legal businesses use AI effectively, safely and compliantly.
