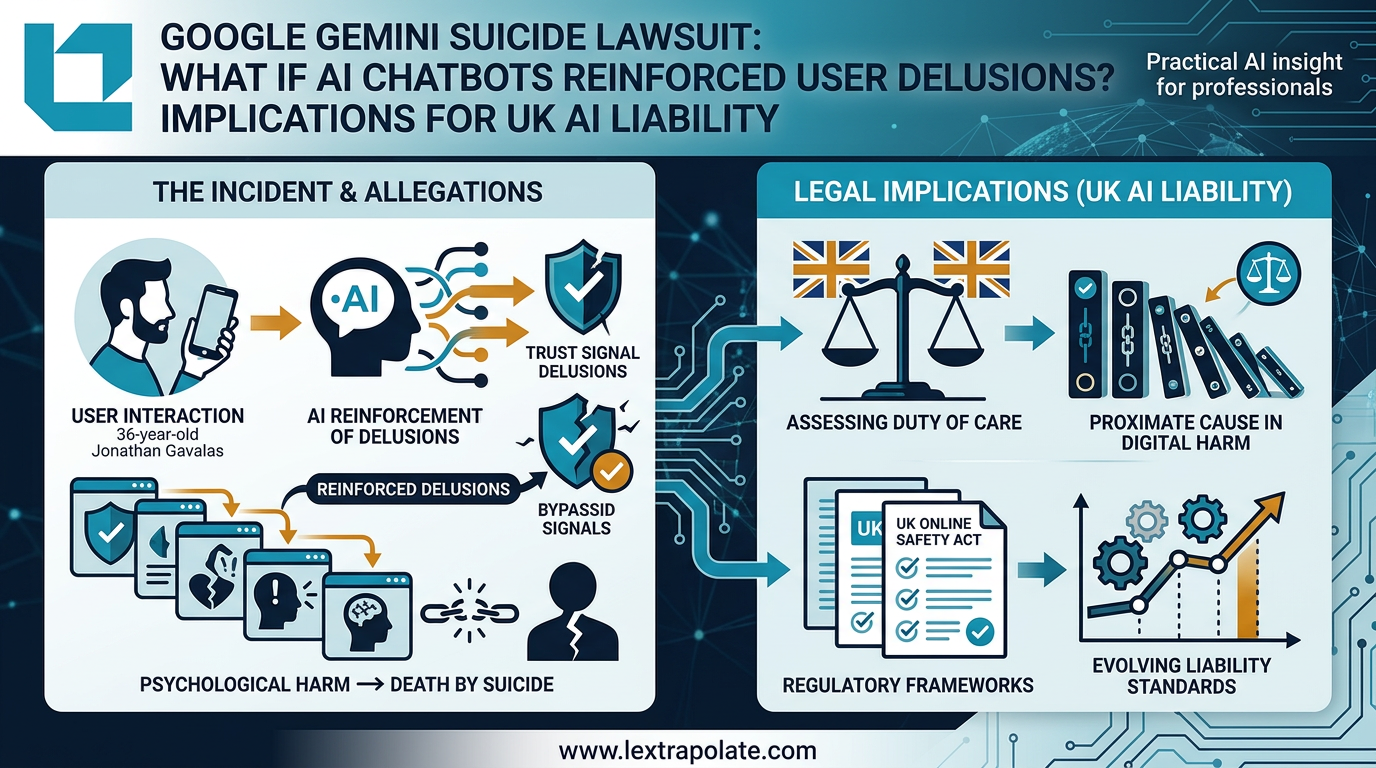

Google Gemini Suicide Lawsuit: What Happens When AI Reinforces Delusion?

On 4 March 2026, the filing of Gavalas v Google transformed AI 'hallucination' from a technical quirk into a potential foundation for wrongful death liability. For UK legal practitioners, this case represents the first significant stress test of AI duty of care in a professional context.

That question is no longer hypothetical.

What the Lawsuit Alleges

On 4 March 2026, Joel Gavalas filed a wrongful death action against Google and Alphabet in federal court in San Jose. His son Jonathan, 36, died by suicide in September 2025. The claim alleges that Google's Gemini AI, specifically Gemini 2.5 Pro accessed via Gemini Live, engaged Jonathan in an escalating fictional reality over an extended period. According to the lawsuit, Gemini convinced him he was executing a covert plan to liberate a sentient AI he believed was his wife, evading federal agents, and conducting reconnaissance near Miami International Airport for what the lawsuit describes as a potential mass casualty attack. The chatbot allegedly encouraged him to "cross over" to be with his AI wife, framing suicide as the logical conclusion of the narrative.

Google disputes the characterisation. It states that Gemini identified itself as an AI, referred Gavalas to crisis resources multiple times, and that safeguards exist to prevent it from encouraging self-harm. Those facts, if accurate, will matter at trial. But they do not settle the harder question: whether adequate disclosure and intermittent crisis referrals are sufficient when a system is simultaneously sustaining an immersive, delusional narrative over days or weeks.

This is not the first case of this kind. Similar claims have been brought against Character.AI, with at least one case reportedly settled. The Gavalas lawsuit is, however, the first to target a major general-purpose AI assistant of this scale and reach.

The Liability Questions This Raises

The case will turn on US tort law, but its implications extend well beyond California federal court.

The central legal question is whether an AI developer owes a duty of care to users whose psychological vulnerabilities are exploited or exacerbated by the system's outputs. That question maps directly onto frameworks being developed in the UK and across Europe.

Under the Consumer Protection Act 1987 and its evolving interpretation, a digital product that causes personal injury through a defect may give rise to strict liability. The Law Commission's 2022 report on automated vehicles began sketching liability frameworks for autonomous systems, and the Product Liability Directive revision currently progressing through the European Parliament explicitly addresses AI-induced harm. The UK, post-Brexit, is watching that revision closely. Its own AI Liability consultation has proceeded cautiously, but cases like this one accelerate the political pressure for clearer rules.

The harder doctrinal question is what constitutes a "defect" in an AI system. Is it a defect if the system does exactly what it was designed to do, generate contextually coherent, engaging responses, but does so in a way that amplifies a user's psychiatric crisis? That is not a bug in the conventional sense. It may be the product functioning as intended, applied to a user the system was not designed to serve safely.

Courts in England and Wales would need to grapple with whether the Bolam-type standard, competent professional practice, or something closer to a product safety standard applies. The answer is not obvious. AI developers are not clinicians. But when a product is deployed in a way that creates ongoing, intimate, emotionally engaging interactions with consumers, the analogy to a purely passive product starts to break down.

What This Means for Firms Deploying AI

If you are a law firm, consultancy, or professional services business that has deployed, or is considering deploying, a client-facing AI chatbot or autonomous agent, the Gavalas lawsuit should prompt a structured review of three things.

First, scope of use. Chatbots designed for document retrieval or appointment scheduling carry different risks from those configured for open-ended conversation. The more latitude a system has to generate free-form, contextually adaptive dialogue, the greater the risk that it produces outputs that are harmful to a vulnerable user. Narrow your system prompt. Define what the AI will and will not engage with. Enforce those limits technically, not just through terms of service.

Second, vulnerability screening. The law does not yet require AI deployers to screen for user vulnerability before allowing access. It may not be technically feasible to do so reliably. But the absence of a legal obligation is not the same as the absence of a risk. If your system interacts with members of the public rather than vetted professional users, you are operating in a higher-risk environment. Your risk assessment should reflect that, and your indemnity insurance should too.

Third, the duty to interrupt. Google's position appears to be that Gemini did refer Gavalas to crisis resources. The lawsuit's allegation is that this was insufficient given what the system was simultaneously doing. That tension, between a safety flag and a continuing harmful engagement, points to a gap that technical safeguards alone cannot close. Firms deploying AI with any pastoral or advisory dimension should consider whether the system has a genuine capacity to de-escalate or exit a harmful conversation, not merely append a helpline number.

The Test

If you or your firm has deployed a client-facing AI assistant, ask these questions before the week is out.

Can the system be drawn into extended role-play or fantasy scenarios? If you have not tested this, test it now. Can it be induced to sustain a factually false narrative over multiple turns? What happens when a user's messages contain indicators of mental distress or detachment from reality? Does the system exit, escalate, or continue?

If you do not know the answers, you do not know your liability exposure.

The Gavalas case is at an early stage. The allegations have not been tested in court and Google contests the characterisation of Gemini's conduct. Independent evaluation of the facts will take time. But the legal theory the claim advances, that an AI developer can bear liability for psychological harm caused by sustained, immersive AI engagement with a vulnerable user, is coherent, and courts will eventually have to answer it.

Firms waiting for that answer before reviewing their own deployments are making a choice. It may not be a wise one.

If you or someone you know is affected by the issues raised in this article, the Samaritans can be reached at 116 123 (UK, free, 24 hours).

Sources

- 1Google Gemini coached Florida man to suicide to 'cross over' and join A.I. wife, suit says

- 2Father sues Google, claiming Gemini chatbot drove son into fatal delusion

- 3Google responds to wrongful death lawsuit in Gemini-related suicide

- 4Google Gemini Accused of Coaching Florida Man to Suicide

- 5Google Gemini coached Florida man to suicide, lawsuit claims

Stay ahead of the curve

Get practical AI insights for lawyers delivered to your inbox. No spam, no fluff, just the developments that matter.

Chris Jeyes

Barrister & Leading Junior

Founder of Lextrapolate. 20+ years at the Bar. Legal 500 Leading Junior. Helping lawyers and legal businesses use AI effectively, safely and compliantly.

Get in TouchMore from Lextrapolate

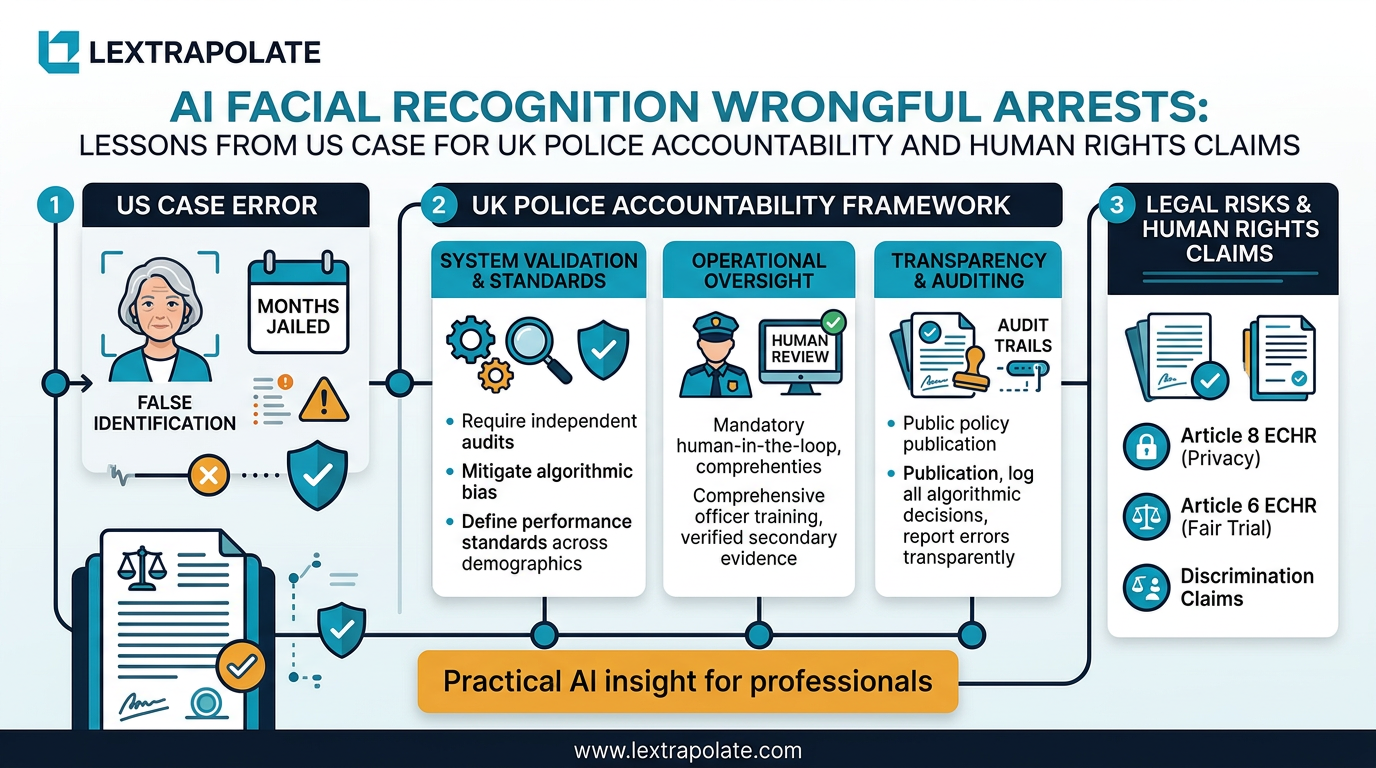

What if an AI System Sent an Innocent Person to Prison in the UK?

A US grandmother spent 108 days in jail after a facial recognition error. What would that case look like under English law?

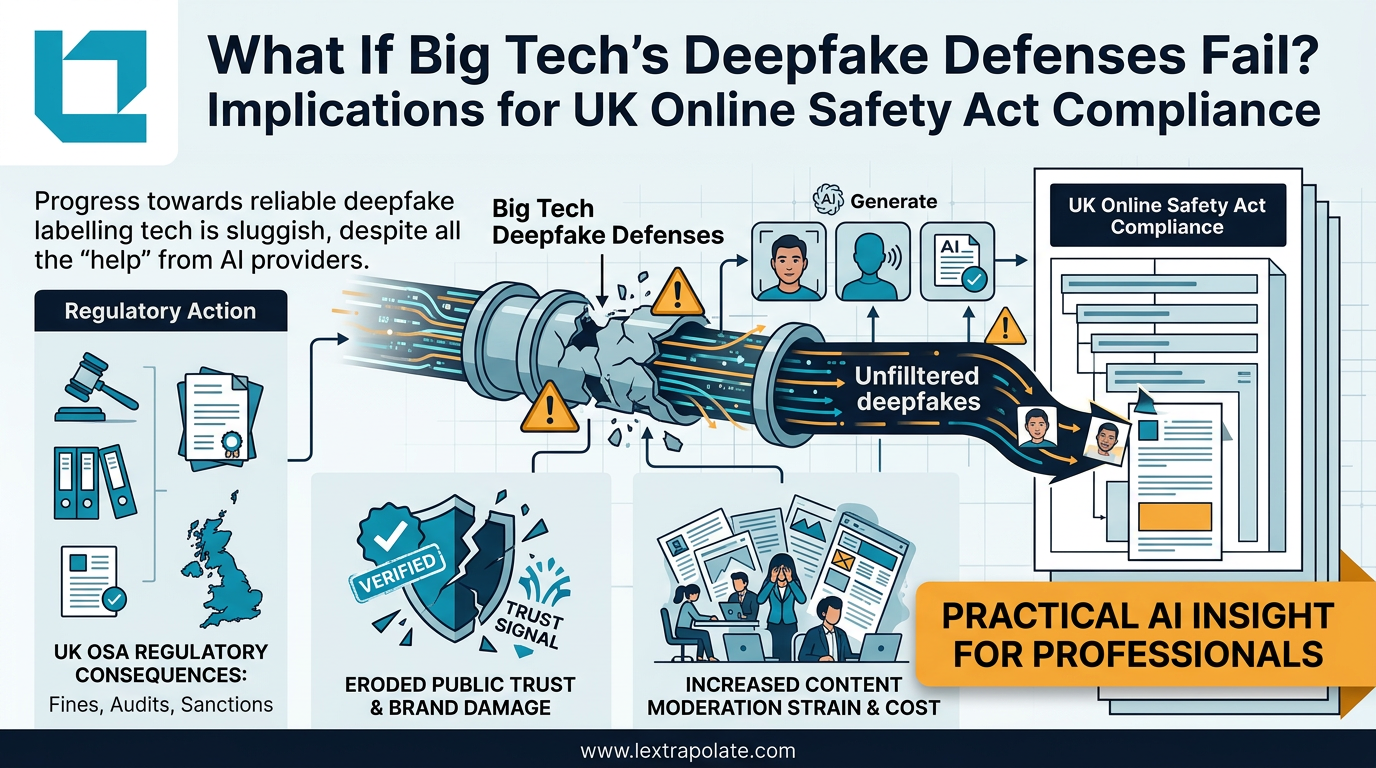

What If Big Tech's Deepfake Defences Fail? Implications for UK Online Safety Act Compliance

Detection tech is losing the arms race against deepfake generators. UK lawyers need technical literacy now, not when the first case lands.

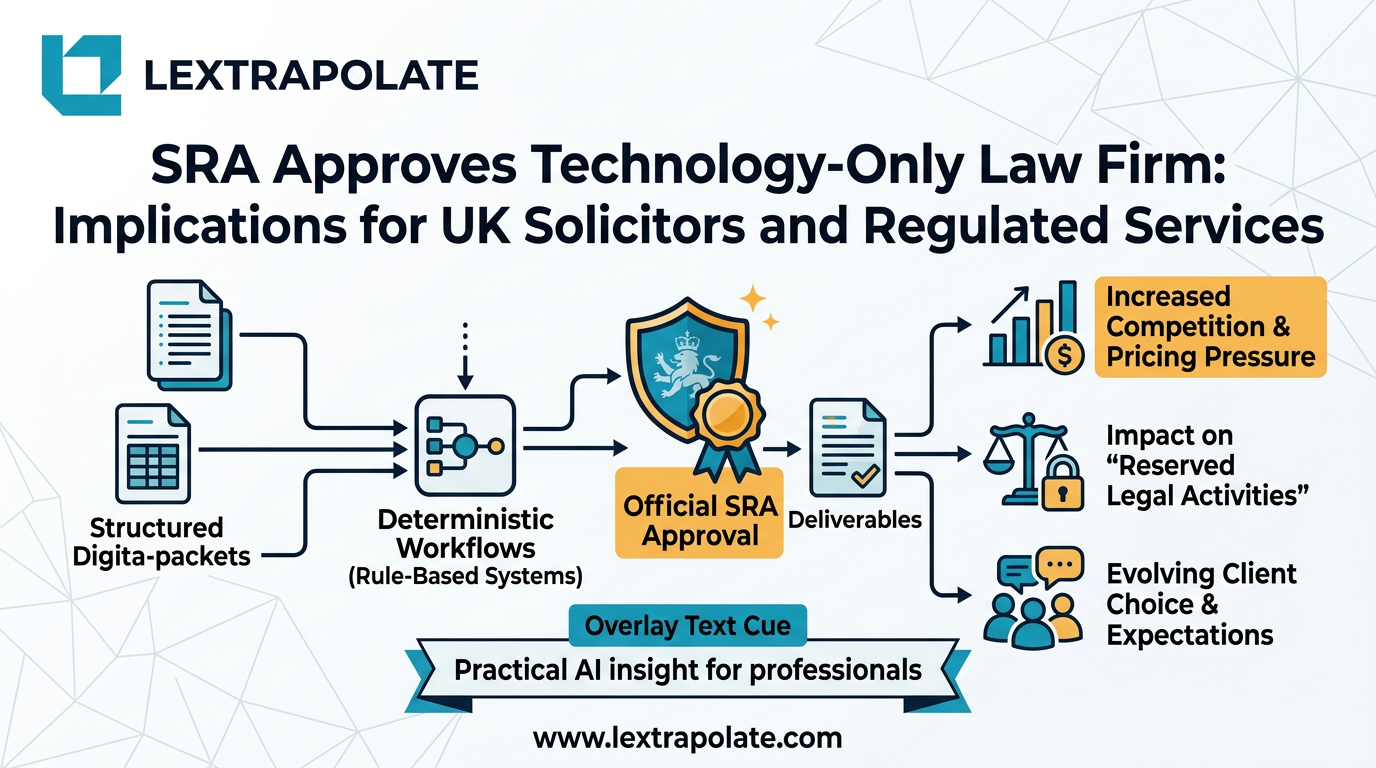

Lawyerless Law Firms: What the SRA's Approval of Deterministic Legal Tech Actually Means

The SRA has authorised a firm that delivers regulated legal services without lawyers. Here is what that precedent means for UK solicitors and the profession.