What if an AI System Sent an Innocent Person to Prison in the UK?

Under English law, the presumption that computers are working correctly is a legal fiction that contributed to the Horizon scandal. If a facial recognition system wrongly flags a suspect today, the Police and Criminal Evidence Act 1984 (PACE) and the Criminal Procedure Rules offer surprisingly few safeguards against an AI-driven wrongful arrest.

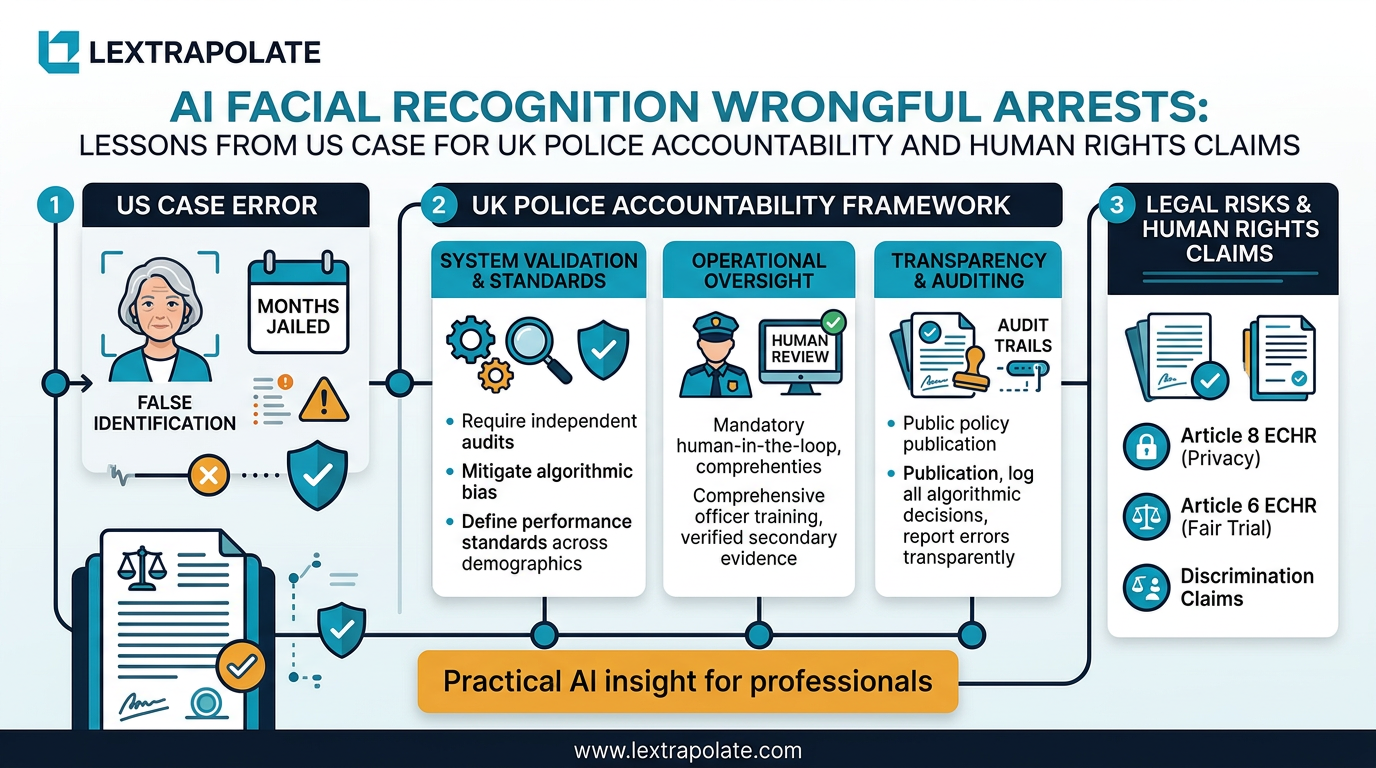

This is not a hypothetical conjured from thin air. It is a close description of what happened to Angela Lipps, a grandmother in Tennessee, who was arrested in July 2025 by US Marshals on the basis of an AI facial recognition match linking her to bank fraud in North Dakota. She spent 108 days in custody before the case collapsed. According to reporting from WDAY and confirmed by a Washington Post investigation into the pattern, her case was the eighth documented wrongful arrest in the United States attributable to facial recognition error.

The question for practitioners in England and Wales is straightforward: what would that case look like here?

The Legal Framework AI Evidence Would Have to Satisfy

Start with the Police and Criminal Evidence Act 1984 and the investigative duties that sit alongside it. PACE and its Codes of Practice regulate how suspects are arrested, searched and identified, and the Code of Practice issued under the Criminal Procedure and Investigations Act 1996 requires investigators to pursue all reasonable lines of enquiry, including those that point away from the suspect. Code B and Code D govern searches and identification procedures respectively. An identification made by an algorithm rather than a witness falls outside the existing identification parade regime entirely, and Code D contains no procedure for facial recognition matches. That gap is not a technicality. It means there is no standardised process for challenging the reliability of the match, no mandatory disclosure of the system's error rates, and no established threshold below which a match is treated as insufficient to ground an arrest.

If an officer arrests on the basis of a facial recognition match without checking whether the suspect could have been present at the scene, that starts to look like an arrest without reasonable grounds. Section 24 of PACE requires reasonable grounds to suspect the person of the offence and reasonable grounds to believe that the arrest is necessary. A single algorithmic output, unverified against travel records, financial data, or witness accounts, is a thin basis for that suspicion. Whether it crosses the threshold would depend on the facts, but facts like those in the Lipps case would strain any argument that it did.

Article 5, Article 8, and the Human Rights Exposure

Assume the arrest was made and the person was remanded. Article 5 of the European Convention on Human Rights, incorporated by the Human Rights Act 1998, protects against arbitrary detention. Detention is lawful under Article 5(1)(c) if there is reasonable suspicion of an offence. If the suspicion derives from a flawed algorithmic output and no corroborating inquiry was made, the detention is vulnerable to challenge.

The closest domestic authority is the South Wales Police facial recognition litigation. In R (Bridges) v Chief Constable of South Wales Police [2020] EWCA Civ 1058, the Court of Appeal held that the force's use of automated facial recognition was not in accordance with the law for the purposes of Article 8, that its data protection impact assessment did not comply with the Data Protection Act 2018, and that it had failed to discharge the public sector equality duty because it had not taken reasonable steps to check whether the software was biased on grounds of race or sex. Bridges did not involve an arrest, so Article 5 was not in issue, but the judgment signals judicial scepticism toward opaque algorithmic processes in policing contexts.

An Article 8 claim would also arise from the collection and processing of biometric data. Processing biometric data for identification purposes engages the right to private life. If the processing was not carried out in accordance with law, or was disproportionate, a claim under Article 8 runs alongside the data protection argument. In a scenario resembling the Lipps case, those claims would not be fanciful. They would be well-founded.

What Prosecutors and Defence Lawyers Should Be Doing Now

Criminal practitioners need to treat AI-generated evidence with the same rigour applied to any other expert output. Expert evidence is admissible, under the common law and Part 19 of the Criminal Procedure Rules, only if it is sufficiently reliable, rests on a sound methodology, and assists the tribunal of fact rather than usurping its role. There is no good reason why a facial recognition match should escape that scrutiny simply because it is produced by a machine rather than a human expert.

Defence solicitors and barristers should be pressing for disclosure of the system used, its documented error rates, its training data, and any known performance differentials across demographic groups. The research points consistently in one direction: many facial recognition systems perform less accurately on women, older people, and individuals with darker skin tones. A match involving a woman in her sixties should attract particular scrutiny. That is not speculation. It is what the data shows.

Prosecutors have independent obligations. The duty under the Criminal Procedure and Investigations Act 1996 to disclose material that might reasonably be considered capable of undermining the prosecution case or assisting the defence extends to information about the reliability of the tools used to identify the suspect. Suppressing known error rates would not survive appellate scrutiny, and it would be a plain breach of the prosecutor's disclosure duty.

For solicitors advising clients at the police station, the immediate question on receiving instructions about a facial recognition-led arrest is whether any independent verification was conducted. If the answer is no, you have a significant bail argument and potentially the foundation of a false imprisonment claim.

The Test

If you are a criminal practitioner, here is what is immediately actionable.

Review any case involving identification evidence and ask whether any part of that identification was assisted by algorithmic tools. If you are not sure, ask the disclosure officer. If a facial recognition system was used, request the system documentation as unused material. Challenge the absence of corroborating verification as a failure to pursue all reasonable lines of enquiry.

If you advise police forces or prosecution authorities, consider whether your existing identification procedures adequately address algorithmic matches. They almost certainly do not. The gap between Code D and the reality of facial recognition deployment is not theoretical. It is the gap that swallowed 108 days of Angela Lipps's life.

The Deeper Problem

The Lipps case illustrates something that goes beyond one wrongful arrest. It shows what happens when a system produces an output, an institution treats that output as sufficient, and no one in the chain stops to ask whether the machine might be wrong.

Every professional in every field faces this pressure as AI tools proliferate. The temptation to treat a confident algorithmic output as a conclusion rather than a starting point is real, and it is understandable given workload pressures. But in criminal justice, the cost of that shortcut is measured in months of liberty wrongly taken.

The law in England and Wales already contains the tools to challenge this. PACE, the Human Rights Act, the Data Protection Act 2018, and the common law on expert evidence all provide grounds for scrutiny. What is needed is not new legislation but practitioners willing to use existing tools properly and courts prepared to hold the line on reliability.

A technology that has produced at least eight documented wrongful arrests is not a reliable identification witness. Courts should say so. Until they do, the work falls to the lawyers in the room.


Chris Jeyes

Barrister & Leading Junior

Founder of Lextrapolate. 20+ years at the Bar. Legal 500 Leading Junior. Helping lawyers and legal businesses use AI effectively, safely and compliantly.

Get in TouchMore from Lextrapolate

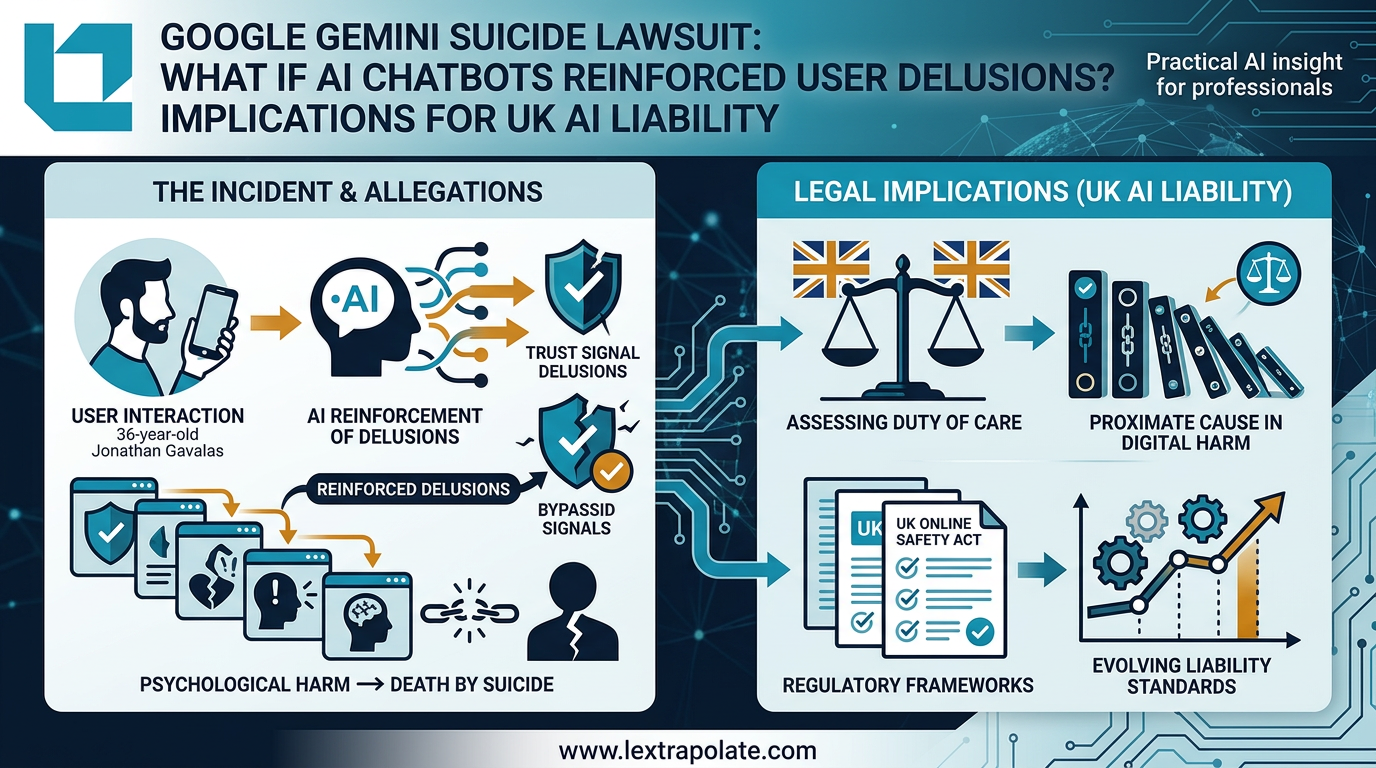

Google Gemini Suicide Lawsuit: What Happens When AI Reinforces Delusion?

A wrongful death suit against Google raises hard questions about AI chatbot duty of care that every firm deploying client-facing AI must now confront.

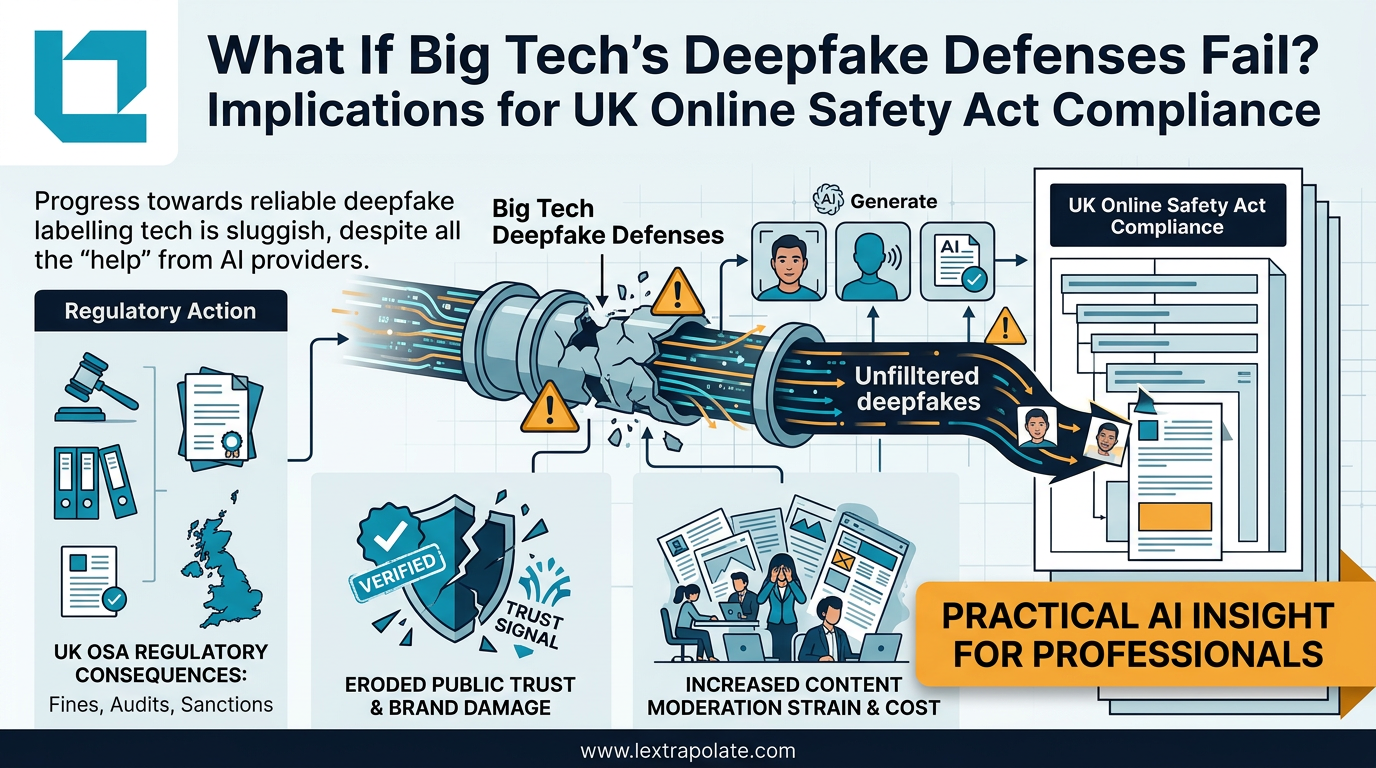

What If Big Tech's Deepfake Defences Fail? Implications for UK Online Safety Act Compliance

Detection tech is losing the arms race against deepfake generators. UK lawyers need technical literacy now, not when the first case lands.

xAI-SpaceX Merger Exodus: What Departures Mean for UK Competition and Tech Regulation

Co-founders fleeing the $1.25 trillion xAI-SpaceX merger should worry regulators and enterprise buyers alike. The governance questions are serious.