What If Your AI Tool Just Ended Your Career? The Citation Trap Lawyers Must Take Seriously

What if the tool you used this morning to speed up a submission just put your practising certificate at risk?

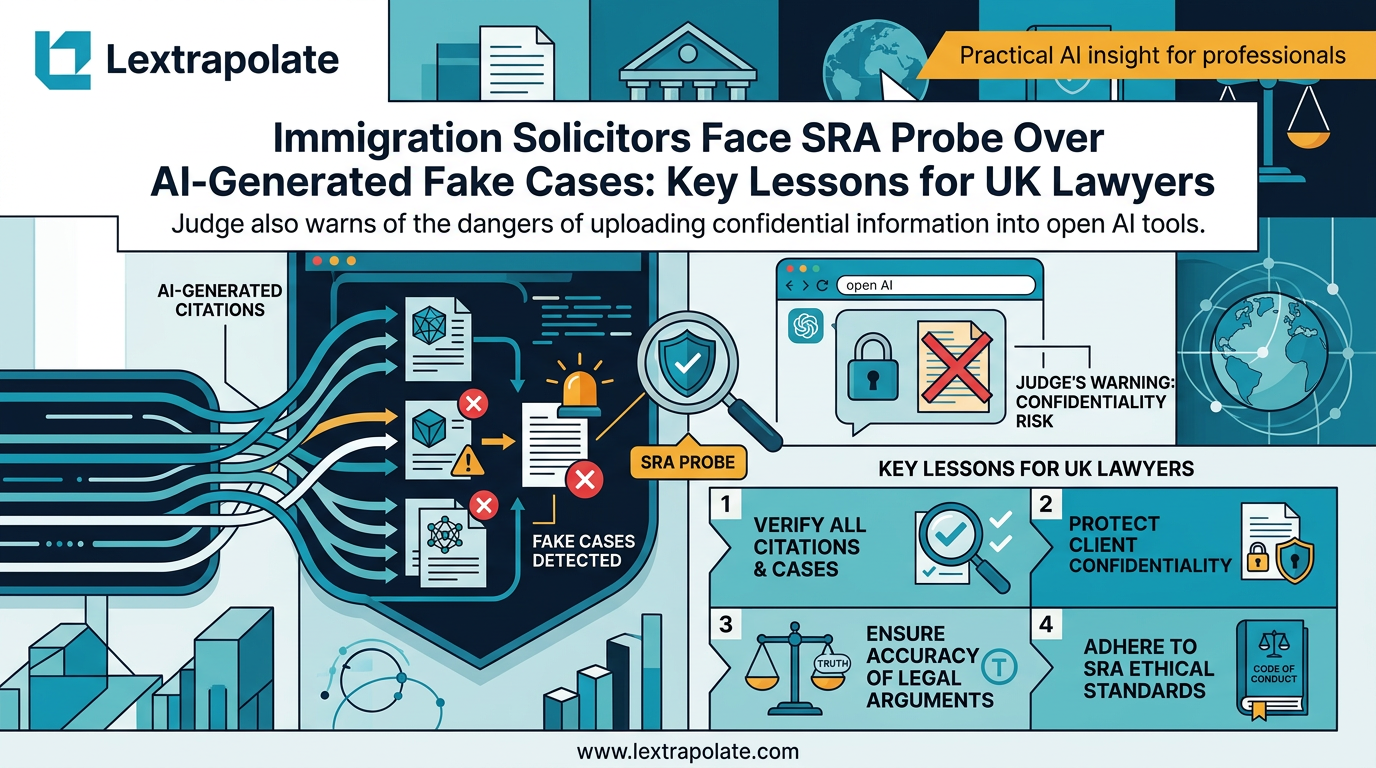

That is not a hypothetical for two immigration solicitors currently facing SRA investigation. Tahir Mohammed of TMF Immigration Lawyers and Zubair Rasheed of City Law Practice both had submissions before the Upper Tribunal containing false or irrelevant case citations. The Tribunal found those citations were suspected or admitted to be AI-generated. Neither case existed in the way cited. The judge referred both to the SRA.

This is not a story about AI being dangerous in the abstract. It is about two specific professionals, two specific regulatory referrals, and a pattern that is spreading fast enough to warrant a formal judicial warning.

What actually happened in the Tribunal

Upper Tribunal Judge Fiona Lindsley did not mince her words. The submissions contained authorities that either did not exist or bore no relevance to the propositions they were cited for. The Tribunal's view was that the most likely explanation was AI generation, specifically ChatGPT.

The Free Movement report of the judgment makes clear the Tribunal's concern extended beyond the citations themselves. The judge was troubled by the absence of any verification before the material reached the Tribunal. Counsel submitting authorities to a court carries a duty to confirm those authorities exist and say what they are said to say. That duty does not transfer to the AI tool. It stays with the lawyer.

The new Upper Tribunal immigration judicial review claim form UTIAC1, introduced in 2024, now requires a signed statement of truth that each cited authority actually exists, can be found at the citation given, and supports the proposition of law for which it is cited. That procedural change was not accidental. The judiciary saw this coming. Some practitioners clearly did not.

The confidentiality problem compounds everything

Judge Lindsley's rebuke covered a second issue that has received less attention but carries equal regulatory weight.

Lawyers uploading client documents into open AI tools risk breaching confidentiality and legal privilege. ChatGPT in its standard consumer form is not a closed system. Data submitted to it may be used to train future models unless specific settings are configured. Most users do not configure those settings. Many do not know the option exists. (It is in settings, usually euphemised as “Improve model for everyone”; turn this off.)

The SRA Standards and Regulations require solicitors to keep client information confidential. Principle 7 (acting in each client's best interests) and the confidentiality duties in section 6 of the Code of Conduct, in particular paragraph 6.3, are unambiguous. Uploading a client's immigration file, witness statement, or instructions letter into a public AI tool almost certainly engages those duties. If a data breach occurs, the Information Commissioner's Office becomes relevant alongside the SRA.

Why this keeps happening

AI tools are extraordinarily good at producing text that looks authoritative. A hallucinated case citation does not announce itself as fictional. It arrives with a plausible name, a plausible court, a plausible date, and a plausible principle of law. To a busy fee-earner under time pressure, it can look identical to a genuine authority retrieved from Westlaw or Lexis.

The difference is that Westlaw and Lexis index real decisions. ChatGPT predicts text. It has no mechanism for distinguishing between a case that exists and a case that would exist if the pattern of its training data were followed to a logical conclusion. It does not lie deliberately. It simply has no concept of truth in the way a legal researcher does.

This is well-documented. The US case of Mata v Avianca, where lawyers submitted entirely fabricated citations generated by ChatGPT, reached the news in 2023. The Ayinde case in England demonstrated the same failure mode in domestic proceedings. The Upper Tribunal decision involving Mohammed and Rasheed shows the pattern has not stopped. The question is not whether it will happen again. The question is whether it will happen to you.

What this means

There are three things any practising lawyer should do immediately.

First, establish a verification rule for every authority cited in any document going to a court or tribunal. The rule is simple: find the case in an authoritative legal database, and read it, before it goes in the submission. Not after. Not as a spot check on some citations. Every one. If you are supervising juniors or trainees who are using AI tools for research, that verification obligation is yours as much as theirs. The SRA's Standards and Regulations make supervisory responsibility explicit. Reading the case might seem obvious, and it is not a new rule: it has always been risky to cite a case you had only found in a practitioner text, because there might be nuance the editors had not mentioned.

Second, audit which AI tools your team is using and what data is being submitted to them. If anyone in your firm is pasting client information into a consumer AI product, that practice needs to stop or be brought within a framework that addresses your confidentiality obligations. Enterprise versions of AI tools with appropriate data processing agreements exist. The consumer tier is not an appropriate vehicle for client work.

Third, treat AI output as a first draft of a research direction, not as a finished research product. AI can identify the issues, suggest the relevant legal framework, and accelerate the process of knowing where to look. It cannot replace the act of looking. Those are different functions, and conflating them is where the disciplinary risk lives.
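For firms that want to make the first of those steps mechanical, the verification rule can be pictured as a simple gate on the list of authorities. What follows is only a minimal sketch in Python, not a description of any real product or SRA-endorsed workflow. The `Authority` record, its field names and the `ready_to_file` function are hypothetical names chosen for illustration. The three checks mirror the wording of the UTIAC1 statement of truth, and each one is a box a lawyer ticks after personally looking the case up, not something the code can establish by itself.

```python
from dataclasses import dataclass


@dataclass
class Authority:
    """One cited authority and the three checks a lawyer has personally made."""
    citation: str                       # placeholder label, not a real citation
    proposition: str                    # what the authority is cited for
    exists: bool = False                # found in an authoritative legal database
    found_at_citation: bool = False     # the reference given leads to that decision
    supports_proposition: bool = False  # read in full; it says what it is cited for

    def verified(self) -> bool:
        # All three checks must pass; a partial tick is treated as a failure.
        return self.exists and self.found_at_citation and self.supports_proposition


def unverified(authorities: list[Authority]) -> list[Authority]:
    """Return every authority that has not passed all three checks."""
    return [a for a in authorities if not a.verified()]


def ready_to_file(authorities: list[Authority]) -> bool:
    """A submission is ready only when no authority remains unverified."""
    return not unverified(authorities)


# Hypothetical placeholder entries, deliberately not resembling real cases.
draft = [
    Authority("PLACEHOLDER-1", "Point A", True, True, True),
    Authority("PLACEHOLDER-2", "Point B", True, True, False),
]

for item in unverified(draft):
    print(f"Do not file: {item.citation} has not been fully verified")
```

The design choice worth noting is that the gate checks every authority, not a sample, and that an authority which exists but has not been read against its proposition still blocks filing.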

The regulatory direction of travel

The SRA has been explicit that existing professional obligations apply to AI use. There is no AI exception to the duty of competence, no carve-out from confidentiality rules because a tool felt efficient, no defence based on the technology being new.

The Upper Tribunal's referrals of Mohammed and Rasheed are a signal. Regulators have the mechanism to act. The judiciary is alert to the pattern. The Law Society, Law Gazette, Free Movement and Law360 have all covered this story, which means it is not passing quietly.

Firms that have not trained their people on AI verification and data handling are carrying a risk they may not have priced. The cost of an SRA investigation is not only financial. It is reputational, and for an immigration practice serving vulnerable clients, it is also a harm to the people who most need competent representation.

The tools are worth using. The shortcuts are not. If you want to use AI properly in legal practice, including understanding what verification workflows should look like in your specific context, that is exactly what Lextrapolate's training programmes are built around. The gap between using AI and using it safely is not large. But it is consequential.

Sources

- Solicitors under probe after false case citations - Law Society

- Immigration solicitors face SRA probe over AI-generated cases

- Tribunal slams two immigration lawyers for suspected citation of AI-invented fake case law

- Judge Rebukes Solicitors For Using AI To Cite Fake Cases

- Solicitor faces probe after putting client documents into ChatGPT

Chris Jeyes

Barrister & Leading Junior

Founder of Lextrapolate. 20+ years at the Bar. Legal 500 Leading Junior. Helping lawyers and legal businesses use AI effectively, safely and compliantly.
